by James Wallace Harris, 3/26/26

By 2030, I expect to see robots for sale for $15,000 that will be as flexible as a Tai Chi master, stronger than an Olympic athlete, handier than a master plumber, and smarter than a college professor. These robots won’t have super-intelligence or self-awareness, because selling such beings would be unethical. Nor will they look human. Another industry will work to create human-looking androids that some people will buy for sex, but I don’t want to deal with that in this essay. I will predict, however, that we’ll never create an android that can pass for human.

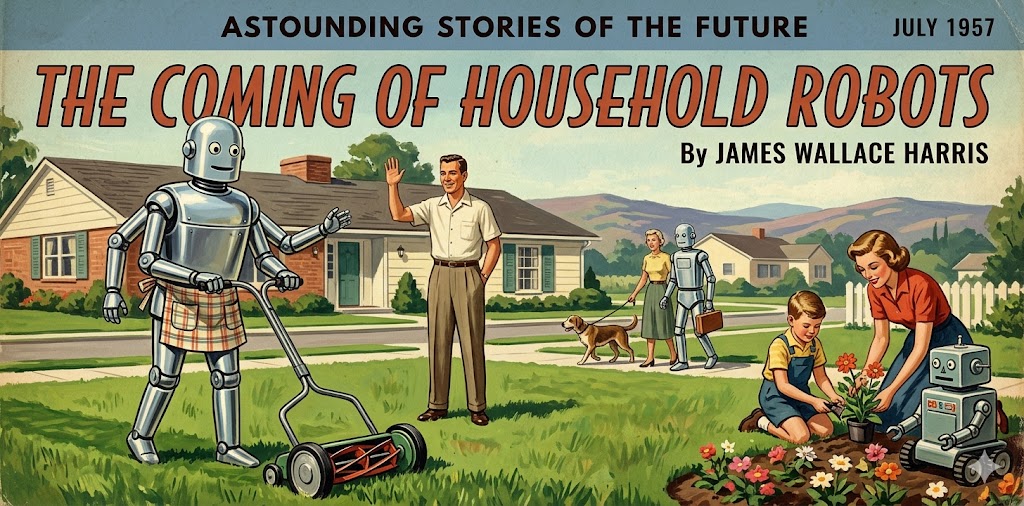

Will this technology disrupt society, blow up the economy, and derange human psychology? Can we integrate robots into our lives without destroying those lives? Science fiction has imagined the possibilities since the 19th century, and fantasy for even longer. Let’s examine some of the situations in which we might use a robot and extrapolate from them.

Already, hundreds of millions of people use AI today, so they can easily imagine conversations with an intelligent robot. And there are plenty of videos demonstrating the evolving physical abilities of robots. Recalling AI and robot progress from 2023 to 2026, it’s not difficult to imagine the progress this technology will achieve in the years 2026 to 2030.

The first thing we should do is visualize the robot you will buy. How tall do you want it to stand? Would a robot taller than you freak you out? Should the head have two eyes and a mouth, or would you be comfortable with a head with six eyes and no visible mouth? Should the body be humanoid? If so, should it wear clothes? If not, are there forms better suited for maximal utility? Do you want your robot to sit on the couch with you, or would you prefer it to stand?

Your wants will decide these choices. If you picture your robot kicking back in a La-Z-Boy and watching television with you, you’ll probably want it to be humanoid. If you buy a robot just for housework, yardwork, and home healthcare, you might purchase one shaped to handle the most chores with the least effort. Right now, we buy robots to do individual tasks like vacuuming floors or mowing the lawn. But ultimately, wouldn’t it be more practical to have one robot that does everything rather than dozens of robots that each do one thing?

Because so many millions use AI for conversation, I will assume faces will be important. Roboticists have experimented with giving robots facial expressions. And I’ve noticed that some robots in movies have body language, like C-3PO. Robots might not need to look very human to feel human.

I’ve been asking my friends about robots and their uses. One woman said she’d like a robot to share all her favorite activities and hobbies. She also said she sometimes wanted another husband, but ultimately decided they were too much trouble. Does that suggest we’re finding other people too much trouble, and we’d prefer machines?

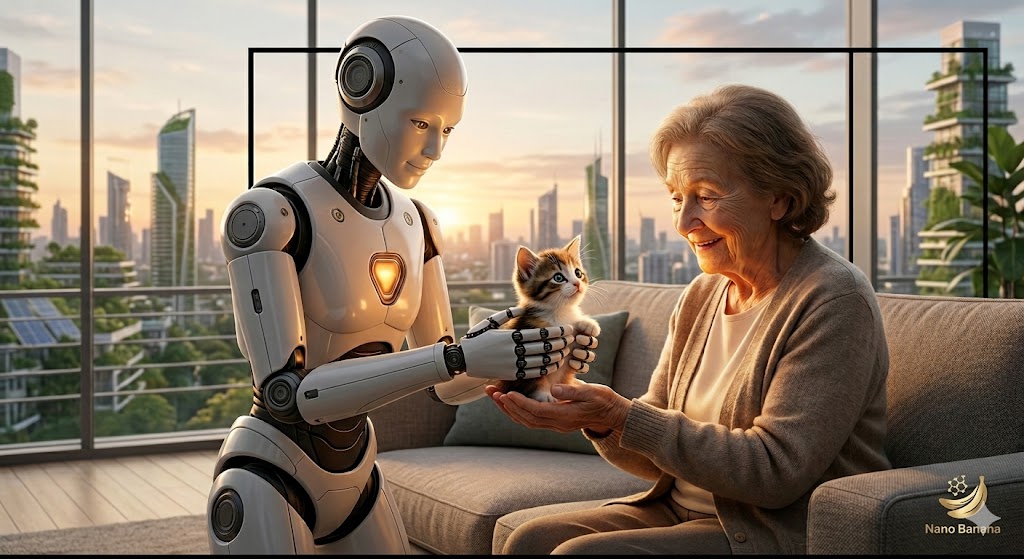

Since most of my friends are around my age, their reasons were much like mine. My wife, Susan, can’t do much physically at all, and I’m getting less and less capable. I’d want a robot with the strength and stamina I had in my twenties to help me work around the house and in the yard. And as Susan and I get older, I’d want robots to be our live-in caretakers.

However, what if everyone did this? How many people would become unemployed? What happens to maids, gardeners, handymen, painters, car detailers, healthcare workers, and the other people we pay to come to our houses? What if general-purpose household robots were also skilled at electrical work, plumbing, and maintaining HVAC systems?

If businesses replace white-collar workers with robots, and manufacturers replace factory workers with robots, and store owners replace retail workers with robots, what happens to the economy?

Some people worry that AI will become super-intelligent and want to wipe out humanity. That’s rather science fictional. But capitalists replacing labor with robots is all too real. Things are so complicated. How many people really want to wipe old people’s butts? Wouldn’t the wipers and the people being wiped prefer robots to have that job?

What jobs do humans want to keep, and which ones would they want to give to robots? And if you had no income, which jobs would you be willing to take?

I see owning a robot in old age as a prosthetic for my weakening body. It’s not to put someone else out of work, but to let me keep working on my own. But what if I were younger, and considering a robot to do housework? Right now, housework is good exercise for my mind and body. I keep telling my wife she should do housework to keep from becoming an invalid. I tell her she shouldn’t let me hog all the health benefits of housework. She doesn’t buy that.

Susan wants to hire a maid or cleaning service. Many of our friends have. I reply that as long as we’re strong enough to do housework ourselves, we should. But what if most people could afford robots to serve them? Would many people love living the upstairs lifestyle we see in Downton Abbey? Won’t that make us lazier? Will we become like astronauts, vigorously working out in the gym for two hours a day to make up for twenty-two hours of weightlessness?

Many people are questioning what social media, smartphones, and the Internet have done to society. Will AI and robots undermine human nature even more? It’s so hard to answer these questions. If millions of lonely people find comfort with AI and robots, is that bad? The obvious solution would be for half the lonely to meet up with the other half.

Since those people aren’t doing that, does that suggest that something else is wrong? Could it be that some people prefer machines to other people? If so, the market for robots will be tremendous. So, even if we think AI and robots are bad for society, businesses will sell them, and we’ll buy them.

I should be out working in my yard. It needs a lot of work. But I rate the creative activity of writing this blog higher, so I’m skipping yardwork this morning.

I can easily visualize a robot working outside, landscaping my yard, because of all the Ray Bradbury and Clifford Simak science fiction stories I’ve read. I don’t really like working in the yard, but I do wish it were nicer. I’d like to redesign my yard to maximize its benefits for insects, birds, and other wildlife. I wish my backyard were all wildflowers.

The idea of looking out the window just behind my computer monitor and seeing a robot crafting a nature preserve for living creatures is immensely pleasing. I can imagine going for a walk in my neighborhood and seeing people and robots working together and separately as I pass each yard. I even picture humans and robots walking dogs, stopping together to chat and let their pups sniff each other. In this daydream, I also see robots pushing old people in wheelchairs and babies in strollers, and I imagine coming home to find Susan directing a robot to repaint the living room.

This is an idyllic fantasy. But is it one we really want?

JWH