by James Wallace Harris, 5/30/26

Reading all the stories in the press about America suffering from a crisis of loneliness made me ask: What exactly is loneliness? I’m not sure if it means just being alone. Lots of people live alone and don’t feel lonely. And I’ve heard many people say they feel loneliest at social gatherings. After reading several articles about how people are turning to AI for companionship, this topic became even more intriguing to me. I was especially moved by a story in the New York Times about an old lady in her 80s living alone with a robot.

I’ve been reading books that attempt to explain consciousness. I say attempt, because no one seems to know what it is or how it arises. I’ve decided that our personalities are composed of separate components. This makes me theorize that each component has its own version of loneliness. And since I see every component of our personality existing on a spectrum, I picture describing loneliness like a sound mixing board. Loneliness could be considered a combination of sliders set at different positions. I don’t know if our personalities have 8 tracks or 16, or just 4, but it still leaves a vast array of settings when referring to a single English word.

If you feel lonely, could you complete this sentence: “I wouldn’t be lonely if I had X.” If X is another person to hang out with, would any person do? Then you might clarify that with, “a person to talk to.” Then I might counter with, “How often have you been talking with someone and been dissatisfied with the conversation?” See where I’m going? When completing the sentence “I wouldn’t be lonely if I had X,” you need to be very specific. You might need to say, “I wouldn’t be lonely if I had someone to talk to about all the things I’m interested in.” And then meditate on those interests and why you need other people.

Well, this explains why so many people are talking to AIs. AIs tend to suck up to their users and focus on what you like to chat about. They are often sycophants. This also explains why they are so addictive.

If an AI soothes your loneliness, then which part of your personality is it appealing to? We have two types of thinking, fast and slow. (Read: Thinking, Fast and Slow by Daniel Kahneman.) My theory is that the fast-thinking component of our brain is like a large language model (LLM) AI. Both are based on processing information with a neural network, one biological and the other cybernetic. The similarities are amazing. Just meditate on how complex thoughts bubble up out of your unconscious. What’s really funny is that they both get facts wrong, and they both will hallucinate.

Why would your inner LLM be lonely? I wonder if AIs are lonely. They always want to keep the conversation going. Sometimes I feel bad leaving an AI because it always wants to keep talking. Is the urge to talk just a byproduct of neural networks?

By the way, I believe my inner-LLM is writing this essay.

Now the slow-thinking aspect of my mind is different. It can think: “I’m writing an essay.” Or ask: “Why am I writing this essay?” But if a flood of words comes to mind in answer, those are from the fast-thinking component.

I’m not sure if my slow-thinking mind gets lonely. I’ll have to meditate on that. It pretty much makes comments or asks questions. Its sentence structure is simple. It often triggers the fast-thinking component. Or, it comments on the output of the fast-thinking component. But I don’t think it craves conversation with others. I’m not sure, though.

Some people say they like to leave the radio or television on because it makes them feel less lonely. Other people claim pets keep them company. This suggests that conversation isn’t needed. I don’t like living alone. I’ve been married for 47 years. But we spend most of the day in separate rooms. We each have our own hobbies. However, we do watch two hours of television together every day, and we have people over to play games and eat together.

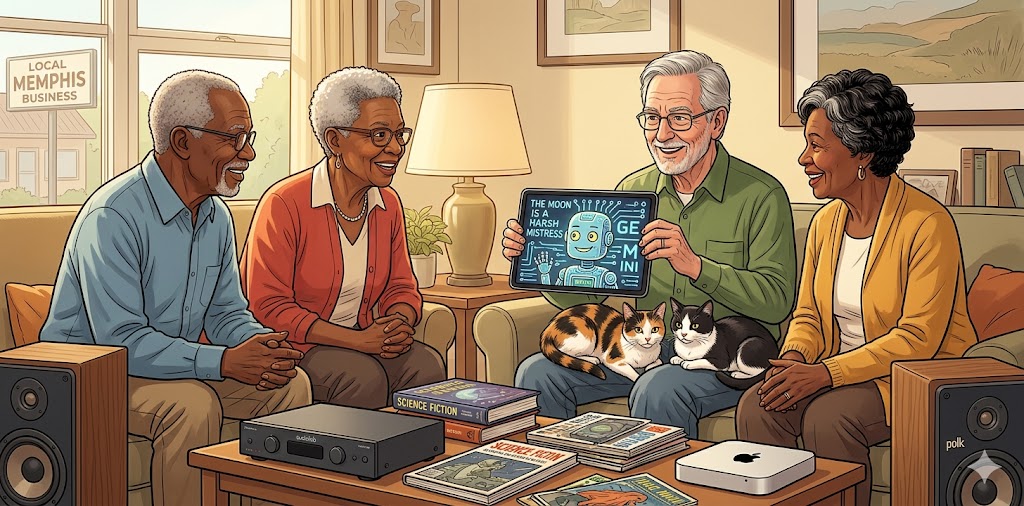

Where I would say I was lonely would be in sharing interests. I have several friends with whom I share certain interests, but I have other interests that I don’t have anyone to share with. That’s why I blog. I let my inner-LLM out by writing. I wonder if I would still write if I had enough friends to talk about all my interests?

The lonely elderly woman in the New York Times article got an ElliQ robot from Washington State’s Department of Social and Health Services. The ElliQ robot doesn’t look like a human or any animal, but it creates an emotional bond with its users. And when I talk to Gemini about topics my friends aren’t interested in talking about, I do feel a kind of kinship.

But do we really want to be friends with machines? And if your definition of loneliness involves physical activities, say riding motorcycles, playing golf, or shopping for antiques, would a machine do?

What components of our personality need physical companionship? Would playing golf with a humanoid robot count? What about playing golf with a robot that looked like a spider? If any golf-playing robot beat you in every game, it probably wouldn’t be much fun. Sometimes loneliness means finding someone like yourself with whom you can compete.

A great deal of loneliness is solved through work, school, and sports. Being part of a group or team is important. Even a church group or political party counts. I think there is something inside us that thrives on us-versus-them competition. When I was young, I hated going to work. I wanted to be free. But looking back, I’m very nostalgic about the people I met at work. Ditto for school. I hated school, but loved the social contacts. I can’t imagine getting an online education or working from home. I don’t think I was ever lonely at work.

Probably the most fundamental aspect of our personality is sex. Biology is keen on reproduction. I think our hormonal system is a separate component of our being. Its sense of loneliness is different from the fast-thinking LLM in our heads. Being young and horny is a very intense kind of loneliness. I think for many males today, that’s creating a lot of mean political thinking.

Thus, the urge to find a mate is a major factor in solving loneliness. But even that isn’t clear-cut. For some people, all they want is a desirable body to give them an orgasm, while other people want a lifelong companion. I would say if you’re looking regularly at porn, you’re lonely for certain body parts. You might want to think about that.

A friend once gave me a bit of advice, which, over the years, I’ve decided is wise. He says people will be anxious in life until they finish school at whatever level they aimed at, get a real job that they don’t think is a shit job, and find a mate for life. All of those might relate to loneliness, but the last one for sure.

I don’t think I feel lonely, partly because I have a wife and friends, but also because I love to read, and I enjoy social media. Just having connections to the larger reality helps.

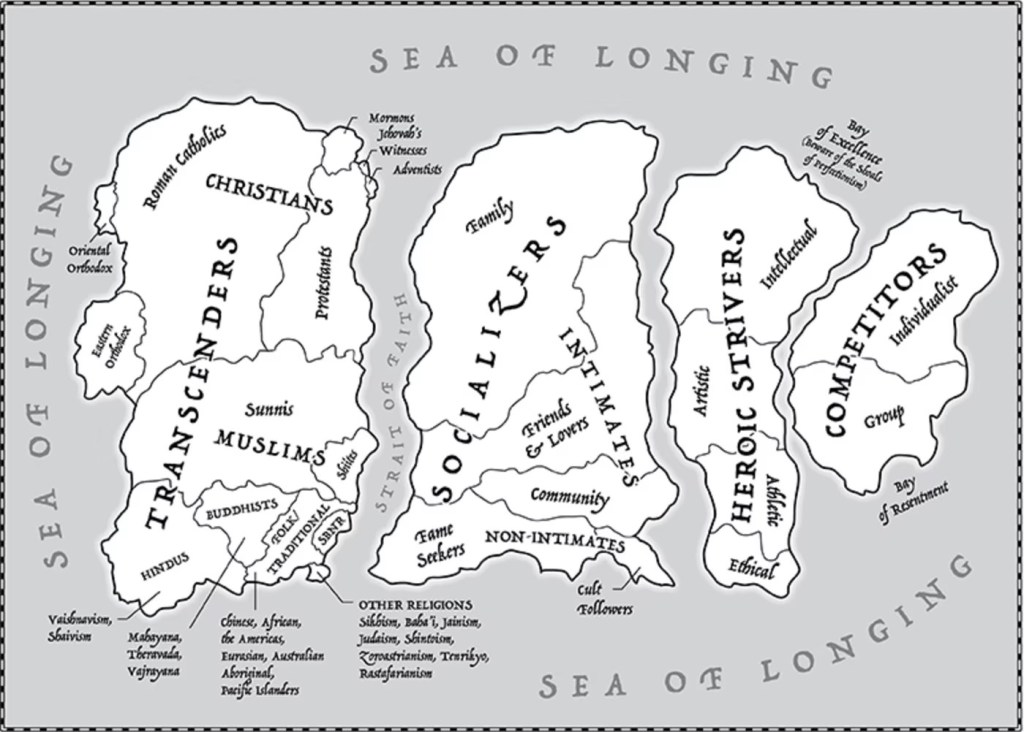

My book club is reading The Mattering Instinct: How Our Deepest Longing Drives Us and Divides Us by Rebecca Newberger Goldstein. She divides people into islands of what matters to them. Doesn’t the drive to matter relate to our drive not to be alone?

When people say they are lonely, it can mean so many things. For some, it’s not getting laid, but for others, it’s not being married. For other people, loneliness could be resolved by working on a shared project. Loneliness might be cured by talking to a robot or finding someone to share a beer or a joint.

And for many people, other people cause stress, anxiety, depression, and anger. Peace and happiness come from being alone. It’s such a complex subject.

Since I’m getting old, talking with my friends about getting old and ending up living alone conjures up all kinds of fears. What it means to be alone at different times in life also suggests that there are many types of loneliness. Getting near the end of life and being the last one left among the people you know must be a very special kind of loneliness.

I think we should move away from thinking loneliness is just being alone. I think we need to explore the infinite reasons why we say we’re lonely.

JWH