by James Wallace Harris, 6/8/26

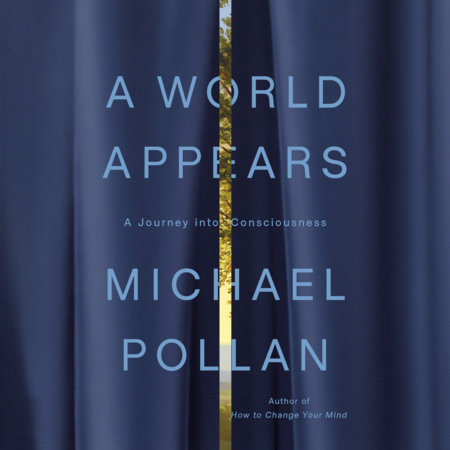

In the first chapter of Michael Pollan’s new book, A World Appears, he makes a good case that consciousness evolved alongside biology, probably beginning in cells. For most of history, humans separated themselves from the animal world by claiming we had a soul, a divine spark, that animals lacked. Scientific studies of the mind are showing that’s not true.

Pollan found scientists who showed that plants could form and retain memories, anticipate, decide, and act on their goals. Their tiny bit of consciousness is nothing like ours, but it shows that consciousness is on a spectrum. There are profound implications if consciousness coevolved with biology, from the virus to the human brain. Research into consciousness and artificial intelligence is revealing a flood of new insights.

If life itself represents a spectrum of conscious development, I’m assuming that any individual animal also shows a spectrum of conscious development over its lifetime.

I’m saying who I was at five is far different from who I am at seventy-four. The difference isn’t as big as the one between me and my cat, Ozzy, but I believe it’s huge. I’m not sure, but in some ways, five-year-old Ozzy might have been more aware than five-year-old Jimmy. If we had both been abandoned in the woods, Ozzy would have had far better survival awareness.

I also believe my awareness/consciousness evolved significantly from 5 years 0 months to 5 years 12 months. I started the 1st grade when I was 5 years and 8 months old. School accelerated the evolution of my conscious mind.

I only have fleeting memories of life before turning five. I can remember only a few interactions with people. I have a few memories from Kindergarten. I had no knowledge of numbers, words, or letters. I had extremely limited spatial and temporal awareness.

I did what I was told, but I would have preferred to play on my own. I loved my toy truck, a little metal front-loader. I loved to climb trees. I loved TV, but we’re talking Captain Kangaroo and Kukla, Fran and Ollie. I had no idea what books and magazines were. I had no idea where I lived.

I’m not sure I had any particular self-awareness. Looking back, I think I was mostly unconscious of reality. If a stranger had walked up to me and pointed a gun at my head, I don’t think I would have been frightened or even known what a gun was. If my parents had left me alone in the house, I’m not sure I could have gotten my own food. I knew my father, mother, sister, and grandmother, but I was indifferent or clueless about everyone else. I don’t remember my Kindergarten teacher or any of my classmates.

My friend Linda has told many stories about her childhood, and she was far more aware than I was at the same ages. Girls mature sooner, but I think Linda was exceptional even for a girl.

My point is, everyone evolves differently. The question is, how broad is the range of consciousness in humans? We know the range of intelligence is great, but what about awareness of reality? How many people are closer to a collie dog than to Einstein?

Nor is consciousness one thing. Elon Musk might be at the height of money-making consciousness, but fairly low at empathy for people.

Looking back at Jimmy at 5, I feel he was essentially unconscious compared to Jim at 74. I also sense that there are countless areas where I’m essentially unconscious at 74 compared to others at any age.

We say humans have five senses: sight, hearing, touch, taste, and smell. Well, what lets me feel my heartbeat? What sense organ lets me feel cold? What sense organ lets me discern the passing of time? What sense organ does my wife have, and I lack, that lets her remember melodies?

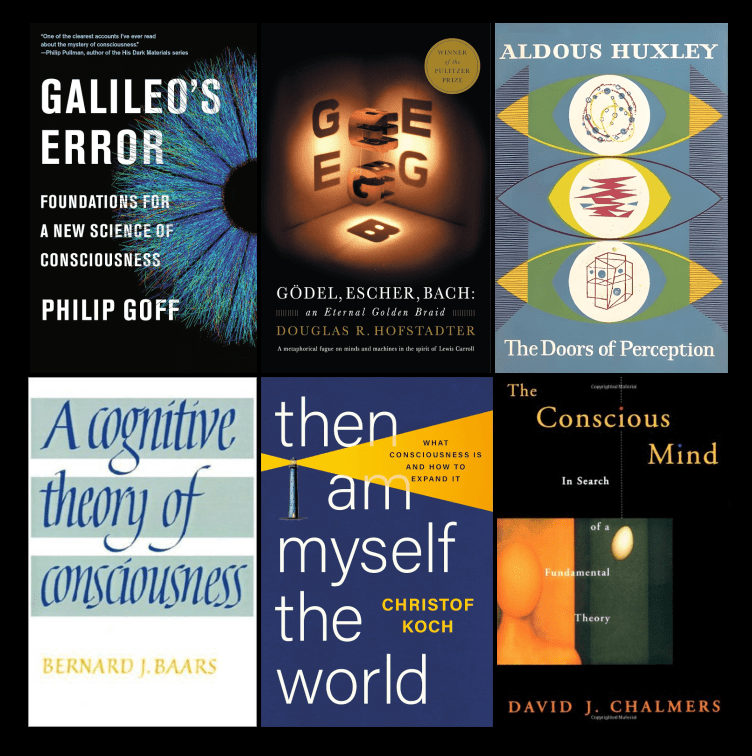

How many levels of awareness are humans capable of achieving? How many aspects of reality can we discern? Science fiction writers, mystics, spiritualists, and users of psychedelic drugs claim there are many levels of higher consciousness we could attain if we tried. Most sound like fantasies, but what if some are possible?

Over the centuries, there have been stories about supermen. Often, they have psychic abilities and other superpowers. Just consider Greek myths and Marvel Comics.

What are some truly possible higher states of consciousness? Compared to Jimmy at 5, I have a higher consciousness. What levels could I have achieved if I had known they existed and I had tried to attain them?

Artificial intelligence is a big topic right now. Computer scientists want to create AIs that are smarter than people. But what other things might AI become aware of that we can’t perceive?

We perceive only a limited range of the electromagnetic spectrum. AIs could be built to perceive far more of the whole spectrum. What if they discover things we never could with our senses or even our scientific instruments? Will we believe them?

If I could travel back in time to talk to Jimmy at 5, could I make him understand anything about being Jim at 74? I doubt it. He could not even conceive of time travel, aging, or growth.

In recent months, I’ve been contemplating the evolution of my own consciousness. I think that evolution accelerated between 5 and 12. But then it exploded around 13. Most conceptual expansions peaked in my twenties. And most of my conceptual abilities have been declining since then. However, I feel my ability to generalize is still growing. That might be wisdom, or it might be a delusion.

The more I study my own mind and read books by scientists who study minds in general, the more I’m convinced that our consciousness coevolved with physics, chemistry, and biology. Thus, when my physical body dissolves with entropy, so will my mind.

There are even some scientists who still hold that part of our awareness is metaphysical and will survive physical existence. Those scientists say artificial intelligence will never have that metaphysical spark they call the soul. I wonder something different.

What if the total gestalt of body, mind, awareness, sentience, and consciousness can be called our soul? But what if that soul is mortal? And what if, collectively, all life on Earth has a kind of soul too, one that is also mortal? Obviously, all life is evolving. But how much can we evolve as individuals?

Life and evolution are anti-entropic. Death is entropy. Is there a metaphysical existence that is not entropic?

JWH