by James Wallace Harris, 4/11/26

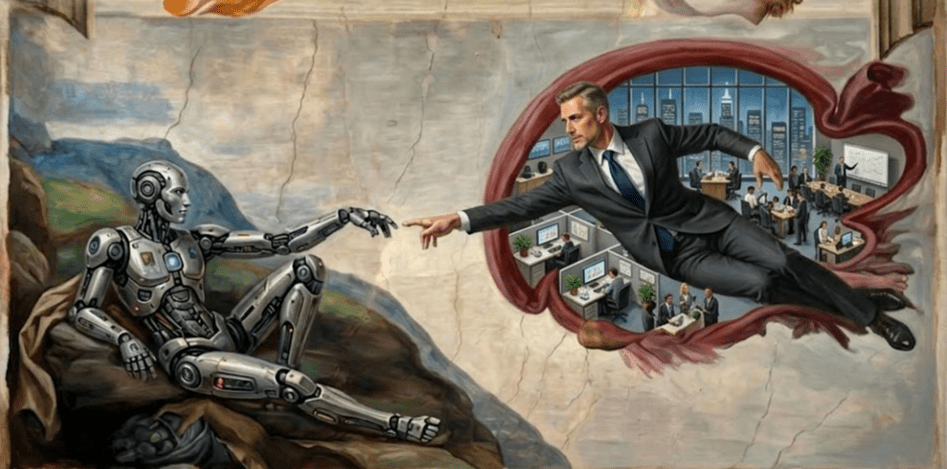

Current research into the human brain, sentience, and artificial intelligence reveals that we are not who we think we are. How our conscious self-aware minds emerge from biology, chemistry, and physics still baffles scientists. After working with AIs, part of me believes that Large Language Models (LLMs) mimic parts of my own mind, the parts that generate language and thoughts. It makes me ask: “Where did ‘I’ come from?”

“Where did I come from?” is a common question asked by young children. Unfortunately, the answer they often get is ontological claptrap about God. This brainwashes most individuals for life. All too often, parents make shit up like a hallucinating LLM, or they tell their kids what they were told as children. How many parents are honest enough to say, “We don’t know”?

We could tell little ones that science can explain the physical world, but the physical world arises from the quantum world. We don’t understand that domain very well. We can confidently say life rose out of the physical world, and we understand that process to a degree through physics, inorganic chemistry, organic chemistry, microbiology, botany, and biology. Awareness and life rise out of biology, but we don’t understand that very well at all. We could also say that we speculate about things smaller than the quantum domain and larger than the cosmological domain, but it’s only theory. As far as we can tell, there’s always something smaller and larger, and existence might be infinite and eternal.

Another honest answer might be, “We’re going to send you to school for sixteen years, and when you’re finished, you still won’t know.”

Of course, the previous answers depend on what your kid meant by “Where do I come from?” That question might have been inspired by one of their friends being told they came from Cleveland, and your kid only wanted to know where they were born. Or your kid might be bright enough to grasp cause and effect, and wonder where everything comes from.

What if we take the question to mean exactly “Where do ‘I’ come from?” Isn’t it true that we’re all islands of consciousness floating in an infinite sea of reality? If your kid is a child prodigy, they may have arrived at their own “I think, therefore I am” experience.

An existentially interesting answer might be, “Your brain has several tools for understanding reality. They are senses, memory, language, logic, and emotions. If you study how they work and their limitations, you’ll eventually be able to understand how your sense of ‘I’ came into being as a self-aware entity.” You could even get a bit Zen on them and say, “When you figure out who or what is saying ‘I’ or ‘me’ in your mind, you’ll know.”

I’ve been contemplating the nature of my mind because of the recent AI craze. Mainly, I’m trying to distinguish which part is what I call me. When I meditate, I can watch my thoughts appear out of nowhere. I see my thoughts separate from my sense of just being aware. Does that mean my thoughts aren’t mine?

Twice in my life, I have lost words and language. The first time was when I took a large dose of LSD. The second time was when I experienced a TIA. In each case, words just weren’t there. I just observed. I moved around and interacted with my environment, but I had no thoughts telling me what was happening. By the way, during those moments, I had no sense of “I” or self.

When I had the TIA, it was in the middle of the night. I woke because of a bright flash of light, like a lightning flash in my dream. I looked at my wife, but I didn’t know her name or how to talk to her. Instinctively, I went into the bathroom, shut the door, turned on the light, and sat on the commode. I just stared at my surroundings. After a lapse of time, I can’t say how long, the alphabet came to mind, and I internally said to myself, A, B, C, etc. Then words like “towel” and “door” came to mind. Eventually, thoughts returned, and I felt normal. I went back to bed.

Later on, I wondered whether the state of being without words was like how an animal perceives the world. This experience showed me that who I was wasn’t my thoughts. However, my mind is always active, generating thoughts. Where do they come from? It’s very hard to separate the being who observes and the being who thinks.

If I relax, I can turn off my thoughts for short periods. It’s somewhat like holding my breath; eventually, I have to let go, and my thoughts rush back. Sometimes I feel whatever generates my thoughts is like an LLM. But what prompts it to generate words?

When a thought asks, “What creates a thought?” is another brain function asking my internal LLM a question? Or is it my LLM talking to itself? Or can my observing self ask questions? Yesterday, when this conundrum appeared in my mind, the question “What is air?” popped up. After that, words like nitrogen, oxygen, and molecules began bubbling to the surface.

Thinking about thinking is a kind of metacognition. While meditating, I can distinguish two types of thinking. There is a slow thinker who uses few words, who can analyze what I’m experiencing. And there is a computer-fast thinker that shoots out words, ideas, and concepts far faster than I can consciously control. Reading Thinking, Fast and Slow by Daniel Kahneman confirms this observation. Is my “I” the slow thinker?

I don’t feel the observer has any control or input over thinking. I don’t think the observer that exists without language is my sense of I-ness.

When I’m on the phone with a friend, who is talking? The inner LLM, or the slow thinker, which I call the Analyzer? I think the Analyzer triggers whole streams of words from my brain’s LLM that come far faster than my Analyzer thinks.

This just occurred to me. Lately, my friends and I have often struggled to remember a word. Is the LLM forgetting, or the Analyzer? I know I could fall into dementia or have a major stroke and lose all ability to use language, yet I could continue to live for years. Can a stroke erase the Analyzer? I think the TIA and LSD did temporarily.

In his book The Mind Is Flat, Nick Chater makes a case that our unconscious mind isn’t a deep, complex part of our being. He calls it flat and describes it as working like an LLM. He recounts case studies that suggest the unconscious mind is far simpler than psychologists have imagined.

Our thinking mind depends on the data it was trained on, which is another way of saying our thinking mind is like an LLM. If all a mind consumes is radical theology, then radical theology is all it knows. Does it really know anything? Or is it just regenerating ideas like an LLM?

Chater says our beliefs are illusions. Even before I read Chater, I had decided beliefs were delusions. If beliefs are a waste of time, what use is our thinking? We want to understand reality. We want to communicate with other people. But don’t words ultimately fail us?

Who is writing this essay? The Jim LLM, or the Jim Analyzer, or the Jim Observer?

As of now, I believe my I-ness comes from the Analyzer. I’m not positive. I know it can be destroyed like my memory, or my LLM thought processor.

Does the observer only observe, or does it learn? Does it become a keener observer? Or does the LLM become smarter, and the observer just follows along like the wake of a boat? This line of thought makes me want to reread Alan Watts’s books on Zen Buddhism and Jerzy Kosinski’s novel Being There.

What we know appears to come from our built-in LLM. It’s trained by life experiences, education, and sharing opinions. But how much does the Analyzer know? It can call bullshit on thoughts the LLM generates, but does it know anything? I feel our sense of ethics or morality belongs with the Analyzer. However, I don’t think it’s a deep thinker. It feels like it works on intuition. That suggests another processor working deep in the brain – a Large Emotion Model. Since I believe emotions come from biological processes, I can’t imagine AIs having LEMs.

To the question “Where do ‘I’ come from?” the answer appears to be the Analyzer. If that’s true, when does it emerge in our development? Is it the processor in our brains that develops wisdom? If it is, then it learns slowly. Does this process develop in artificial intelligence?

If drugs, disease, poor health, and injury can hurt all these components of my personality, does anything survive death? Is there another component we call the soul? I have never been able to distinguish it in my meditations. And what about that aspect we call personality?

I have been reading many articles about people befriending AIs and even claiming to be in love with them. That suggests people react to the LLM function in others, whether AI or human. Is that our true personality?

I tend to believe that who we are is a gestalt of all our components. However, in this day and age, we emphasize the LLM features. And whether human or AI, that feature is only as good as its training.

Here is a Zen koan for you. If a person or AI is racist, is it because of who they are or because of their training? Are we really the ideas we express?

JWH