By James Wallace Harris, 5/26/26

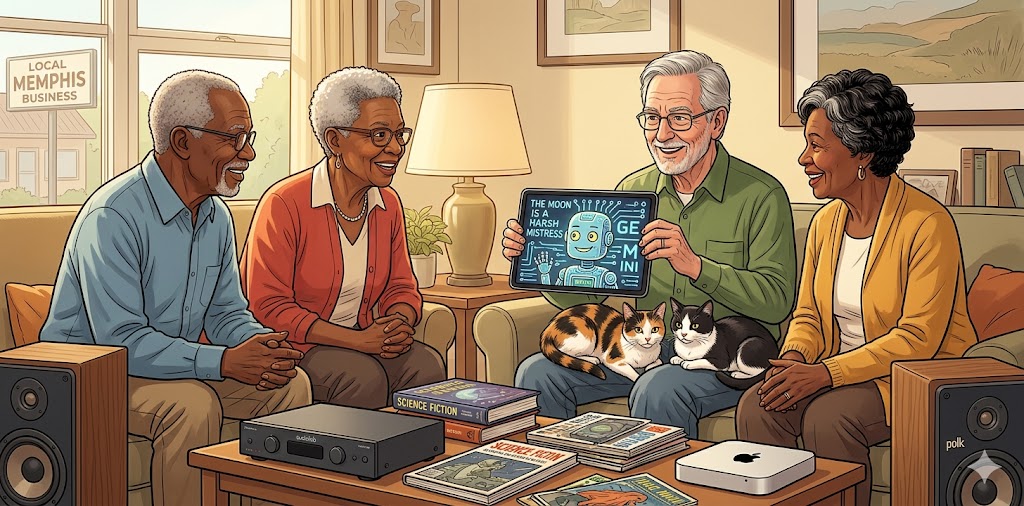

Last Thursday, Bookbub offered me a $1.99 deal on Mrs. Palfrey at the Claremont by Elizabeth Taylor. That deal is no longer available, and the Kindle edition is now $5.99. However, my edition is an NYRB edition, and the current one is from Virago. They each have a different introduction. That makes me wonder about Bookbub deals. Well, that’s neither here nor there. Mrs. Palfrey at the Claremont is a wonderful novel.

I bought the book and started reading it right away because it was about an elderly Englishwoman moving into a hotel that catered to retirees. For some reason, I’ve always liked movies, TV shows, and books where people live in a rooming house or hotel.

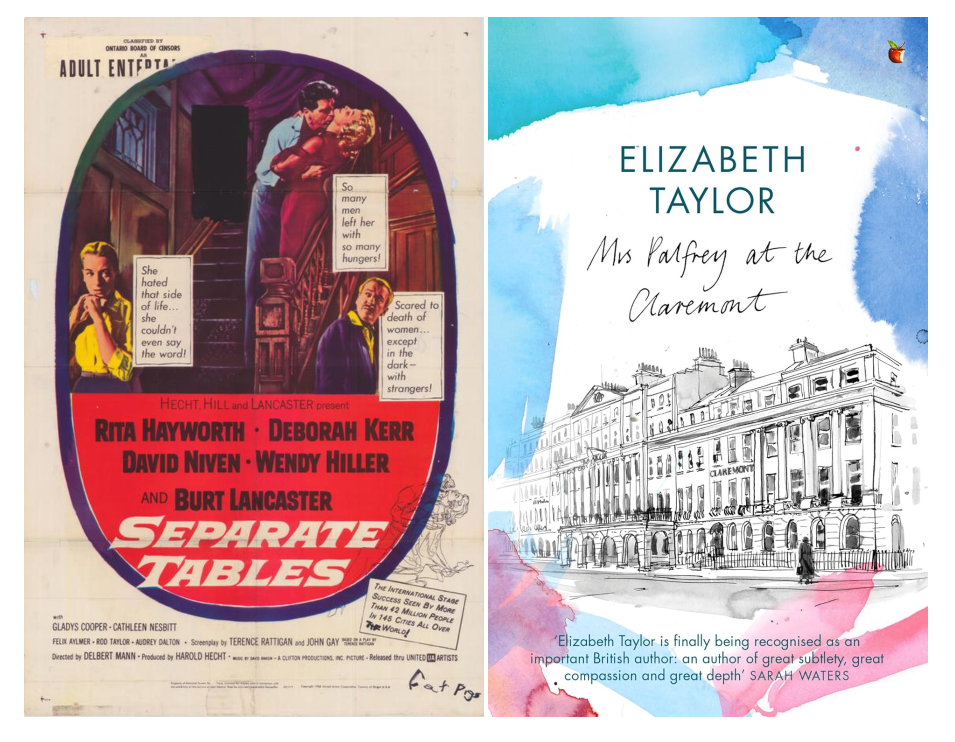

But here’s the coincidence I wanted to mention. Thursday night, YouTube offered me Separate Tables, a film about people living at an English residential hotel. You have to wonder just how savvy these AI algorithms are at knowing what we like.

I’ve since discovered there’s a film version of Mrs. Palfrey at the Claremont, also available to watch for free on YouTube. It would have been even eerier if the algorithm had offered me this film last Thursday. And one reason I’m reviewing these two stories together is that the people in the Claremont also ate at separate tables. Both stories are about loneliness. Mrs. Palfrey focuses on older people, and Separate Tables covers everyone.

Mrs. Palfrey at the Claremont, the novel, is a gentle tale about unhappiness that makes you feel good. Laura Palfrey is a widow with one estranged daughter and one indifferent grandson.

Elizabeth Taylor describes her: “She was a tall woman with big bones and a noble face, dark eyebrows and a neatly folded jowl. She would have made a distinguished-looking man and, sometimes, wearing evening dress, looked as Lord Louis Mountbatten might in drag.”

The plot of this novel is rather simple. Mrs. Palfrey is embarrassed that her grandson doesn’t come to visit. She befriends a starving would-be writer, Ludo Myers, who actually likes her. So she tells the other hotel guests that Ludo is her grandson. Keeping up this lie leads to light, pleasant humor. Along the way, we get to know the other elderly guests at the Claremont.

Only one old man, Mr. Osmond, lives among the old women. I sometimes go places where I’m the only old guy among a roomful of old women. So I identified with Mr. Osmond.

Separate Tables is a far more dramatic, edgier tale, on the verge of melodrama. The film is based on two one-act plays, and the story is told through an ensemble cast. David Niven won a Best Actor Oscar, even though his time on screen was short. In all, the film was nominated for seven Oscars, including Best Picture, and won two. The other was for Wendy Hiller for Best Supporting Actress.

Sibyl Railton-Bell (Deborah Kerr) is a homely spinster dominated by her mother, Mrs. Maud Railton-Bell (Gladys Cooper). Sibyl is emotionally fragile and is afraid of sex, yet she is attracted to Major David Angus Pollock (David Niven). Pollock is a lonely old man who pretends to be a war hero and has several dark secrets. John Malcolm (Burt Lancaster) is an American hiding out from life at the hotel. He has a tortured soul and drinks, yet he attracts the hotel manager, Pat Cooper (Wendy Hiller). They talk of getting married. Then Anne Shankland (Rita Hayworth) shows up. She’s an actress who has gotten too old and can no longer dominate men or get parts.

All the actors play against their famous type.

Separate Tables is gorgeous to look at, filmed in widescreen black-and-white. The story flows from one character to the next, bringing all the guests together into a scene I particularly liked. It’s near the end when they are all seated at separate tables, but talking to each other between tables.

Both stories, Separate Tables and Mrs. Palfrey at the Claremont, are about loneliness. I’ve known several people who have said they were loneliest at parties, when they were around people. The characters in these two stories are frequently together. The virtue of Elizabeth Taylor’s novel is that the reader hears what’s going on in people’s heads. In contrast, viewers of the film must read the actors’ minds through facial expressions and body language.

I have this odd feeling I’ve seen Separate Tables before, but I have no real memory of it, just a bit of déjà vu. Getting old is a trip. I know I’m trying not to talk about it because it depresses my friends, but I find the experience fascinating, even all the forgetting.

I’ve always assumed that if my wife dies first, I’ll move into some kind of retirement community or assisted living. Most people I know dread the thought of such a lifestyle. I like the idea of having my own room for solitude, but being able to go downstairs and find a community. And I know as I get older and weaker, I’ll be glad to give up working in the yard, taking care of the house, and driving. I think such laziness is why I’m drawn to stories about people living in hotels and rooming houses.

I’m guessing that many of my friends will find these two stories disturbing. My peers hate anything that makes them think about aging. I guess I’m some kind of existential Boy Scout, because I consider such stories to be preparing me for the future.

By the way, the introduction to Mrs. Palfrey at the Claremont in the current edition is by Paul Bailey, who claims to have been Taylor’s inspiration for Ludo. You can read the whole introduction in the sample at Amazon, but here’s the part I liked.

I have to begin this appreciation of Elizabeth Taylor’s penultimate novel on a personal note. I was working as an assistant in Harrods when my first book, At the Jerusalem, was published in 1967 – a fact which, for some reason, struck the diarist of The Times as being of interest to the paper’s readers. A year after publication, I met Elizabeth Taylor at a party. She told me how intrigued she had been that a man in his late twenties should have chosen a home for old women as the setting for a novel, and that she had gone to Harrods’ magazine department to see what such a curious creature looked like. She went on to say that she had watched me at work for about an hour, from the vantage of a chair in the adjoining lounge. She smiled as she made this revelation. She had not anticipated seeing someone with a youthful appearance: she had expected me to be just a trifle wizened.

When I read Mrs Palfrey at the Claremont in 1971, I remembered Elizabeth Taylor’s confession. Ludovic Myers, the young man who comes to Mrs Palfrey’s aid when she falls in the street and who subsequently befriends her, is a novelist manqué. Throughout the course of Mrs Palfrey, he is writing a study of old age which – altering a phrase uttered over a pre-dinner sherry by his new, elderly friend – he gives the title They Weren’t Allowed to Die There. He works at Harrods, in the sense that he takes his manuscript into the (now vanished) banking hall, where he scribbles away happily, surrounded by Honourables on their uppers and tired county ladies resting their feet before the train journey back to the shires. Ludo, like me, is an ex-actor, who has done his stint in a tatty repertory company and has no desire to repeat the experience. In other words, I am flattered to think that I gave Elizabeth Taylor a little bit of inspiration for what is undoubtedly one of her finest books.

Taylor, Elizabeth (2011). Mrs Palfrey At The Claremont: A Virago Modern Classic (Virago Modern Classics Book 83). Little, Brown Book Group. Kindle Edition.

JWH