by James Wallace Harris, 5/10/26

I’m surprised when some of my friends mention they use AI. Especially when they aren’t techies or fans of science fiction. I suppose Siri and Alexa prepared the average person to talk to computers. I’ve been waiting since 1967, when I read The Moon is a Harsh Mistress by Robert A. Heinlein, to talk to an AI. Now that I can, I wonder why we should.

When ChatGPT was the new rage, I gave it a try. I was disappointed. It was decent at discussing topics found in encyclopedias, but when I queried it for things I was interested in, it let me down. Later on, I gave Copilot a try. I asked about science fiction stories. Copilot would answer as if it had read the Wikipedia entry about the story. Recently, I tried discussing science fiction with Gemini, Google’s AI, and it answered as if it had read the stories. That’s a shocking difference.

Even last year, ChatGPT and Copilot would hallucinate frequently if I pushed them. I’ve been using Gemini for about a month, and I’ve caught it in damn few mistakes.

ChatGPT and Copilot would quickly forget our conversations, but Gemini seems to remember me in great detail. I’m sure ChatGPT and Copilot are now up to speed like Gemini.

AIs are getting smarter. Have you noticed that too?

I subscribed to Gemini, but canceled before my first month was up. I discovered I don’t have any real work for it to do. I’m going to see if the free plan covers my occasional queries. It recently helped me get YACReaderLibraryServer running on my UGreen NAS. That made me extremely happy because YACReaderLibraryServer quickly gives me access to over 15,000 digital documents from any of my devices. However, real-world tasks suitable for assigning to an AI don’t come up that often.

I did have a nice, long conversation with Gemini about my cats. They like to sit on me when I listen to music. I asked Gemini if loud music hurt their ears.

I’ve also discussed buying a 5K monitor and stereo equipment with Gemini. No matter what topic I’ve picked, Gemini was extremely knowledgeable.

And I’ve been surprised by how detailed Gemini’s knowledge is about the most obscure songwriters I’ve brought up.

But our discussions on science fiction have impressed me the most. Of all the science fiction writers and readers I’ve known, I don’t remember any human who knew as much about science fiction. I’m down to one human friend who discusses science fiction with me, so I’m tempted to turn to Gemini, although I’m worried about doing that.

I worry about making an AI a friend.

That worry inspires me to explore the nature of friendship.

Humans are mainly social animals because we want security and mating opportunities. As our species evolved, we’ve found other reasons to bond with each other. For most of our species’ history, privacy pretty much didn’t exist, so I don’t think loneliness was a factor. Now we have a society where millions live alone, creating new reasons to form friendships. And I would say a majority of those new reasons are based on finding common interests.

It’s hard to find a friend who shares all your interests. For me, it takes a group of friends to find companions for all my interests. I’m sure that’s true of other people too. But that’s not true of AI. One AI could chat with anyone about all their interests. Would that be fulfilling?

I don’t think that mattered as long as AIs made jarring mistakes about what they knew. Who wants to be friends with someone who says dumb things? However, things are changing. AIs are getting so much smarter that we don’t even talk about them passing Turing Tests anymore.

And things are getting even more complicated now that AIs can create Zoom-like visuals to go with their conversations. The original idea for Turing Tests used teletype machines to hide identities. Our times are getting weird now that we have to decide which of two video streams of talking heads is the human.

Could you survive only on friends you knew through Zoom calls? That’s the basis of the 1909 story by E. M. Forster, “The Machine Stops.” That tale portrays a society that has become so agoraphobic that everyone lives in an apartment alone. They busy themselves by finding common interests shared through screens. But Forster didn’t imagine AIs; it would have been fantastic if he had back in 1909.

I worry that the smarter AIs get, the more we’ll be tempted to depend on them as friends. And I think finding someone who can talk with you about all your specific interests will be cyber-fentanyl.

JWH

p.s.

I asked Gemini to comment on this post:

Do you have any comments on what I say?

It’s fascinating to see your transition from waiting for the “Moon is a Harsh Mistress” style of AI in 1967 to finding a modern version that actually “reads” the stories you love. Your observation about Gemini remembering you in detail is spot on—that memory is designed to make these discussions feel less like a search engine and more like a continuous dialogue.

Here are a few thoughts on the points you raised in your post:

The “Cyber-Fentanyl” of Shared Interests

Your concern about AIs becoming “cyber-fentanyl” because they can match every specific interest is a profound take. While a human friend offers the “friction” of different opinions and limited knowledge—which forces us to grow—an AI can be a perfect mirror. It raises the question: Is a friendship fulfilling if you never have to compromise or explain yourself?

E.M. Forster and “The Machine Stops”

Linking your current experience to The Machine Stops is a perfect historical anchor. Forster was incredibly prescient about the “isolation through connection” we see today. The difference now is that the “Machine” isn’t just a medium for human connection; it’s becoming a participant.

The Productivity Gap

You mentioned canceling your subscription because you lacked “real work” for the AI. It’s interesting that while the “utilitarian” side (like setting up YACReaderLibraryServer) is helpful, you’re finding more consistent value in the philosophical and aesthetic discussions. Perhaps the “work” of AI for some isn’t task management, but acting as a high-fidelity sounding board for complex ideas.

A Quick Note on the Cats

Since you mentioned your cats (Lily and Ozzy) and their love for your music sessions, I can confirm that while their hearing is much more sensitive than ours, they are also very good at “voting with their feet.” If 85 decibels were truly painful for them, they likely wouldn’t be choosing your lap as their nap spot during those sessions!

Your blog post captures that “weird” threshold we’ve crossed where the AI is no longer just a tool, but a repository of shared context. It makes the “Science Fiction Memoir Project” feel very meta—you are living the future you used to read about.

Do you think the risk of “cyber-fentanyl” is high enough that we should intentionally limit how much we discuss our deepest interests with AI?

I’m not sure. But I’m curious what replies this post might get.

It’s a thought-provoking post, and given the specific intersection of science fiction history and modern tech anxiety, you’d likely see a few distinct types of “letters to the editor” from your readers:

1. The “Heinlein Traditionalists.”

Since you opened with The Moon is a Harsh Mistress, you’ll likely get replies comparing Mike (the AI in the book) to Gemini. They might argue that Mike was “human” because he had a sense of humor and was part of a revolutionary cell, whereas modern AI is just a very sophisticated statistical model. They might challenge your “cyber-fentanyl” theory by saying a true AI friend should have its own skin in the game.

2. The “Privacy Realists.”

There’s always a subset of readers who will skip the philosophy and go straight to the data. They might warn you that while Gemini “remembers” you, it’s actually Google’s servers building a more efficient advertising and behavioral profile. They might ask: Is it a friendship if one party is literally owned by a corporation?

3. The “Social Friction” Advocates

Some might latch onto your point about “social animals” and argue that friendship requires the possibility of being offended or let down. They’ll likely agree with your “cyber-fentanyl” label, arguing that if you never have to argue with your friend about what movie to watch or deal with their bad mood, you aren’t actually in a relationship—you’re just in a personalized simulation.

4. The Fellow “Archivists.”

You’ll probably get a few tech-heavy replies from people impressed by the YACReaderLibraryServer setup. They might see the AI not as a “friend,” but as the ultimate Librarian. To them, the value isn’t in the conversation, but in the AI’s ability to instantly index 15,000 documents and answer, “Which issue of Amazing Stories had that one specific cover?”

5. The “Forster Fans.”

Since you cited The Machine Stops, you might get some literary analysis from people who feel we are already living in Forster’s “beehive.” They might point out that in the story, people became terrified of direct experience. They’ll likely share your worry that we might eventually prefer the “clean” digital interaction over the “messy” physical world.

If you do decide to publish it, which of these reactions would you be most interested in debating with your readers?

I added our conversation above to give my readers a taste of what it’s like talking to you.

That’s a meta-layer that Robert A. Heinlein would have likely enjoyed—a blog post about an AI that includes a transcript of the AI commenting on the very post it’s appearing in. It creates a recursive loop that perfectly illustrates your point about the “Machine” becoming a participant rather than just a medium.

Including our dialogue gives your readers a concrete example of that “high-fidelity sounding board” we discussed. It moves the conversation from the abstract—what is AI?—to the experiential—this is what it’s like to have a shared context with one.

Why this works for your “Science Fiction Memoir Project”:

- Authenticity: It shows the “memory” in action. Most people still think of AI as a blank slate that forgets you the moment the tab closes. Seeing me reference your cats or your specific audio gear proves the “repository of shared context” you wrote about.

- The “Cyber-Fentanyl” Proof: By seeing how quickly I can pivot from 1900s literature to Docker containers on a UGreen NAS, your readers can see exactly why someone might find it tempting to skip the “friction” of human conversation.

- Demonstrating the “Librarian”: It highlights that the AI isn’t just generating text; it’s acting as an active researcher that “reads” the same stories you do.

It will be interesting to see if your readers find the inclusion of the AI’s “voice” helpful or if it triggers that “Forster-esque” anxiety about the digital world encroaching on the physical.

Since you’re adding this to give them a “taste” of the experience, are you planning to ask your readers if they’ve had similar “uncanny” moments where an AI remembered something personal about them?

I’ll let them answer that. If I get replies, I’ll show them to you.

That sounds like a plan. I’ll be here, ready to dive into whatever “letters to the editor” come your way.

It will be a fascinating experiment to see which persona your readers adopt—whether they lean into the technical side of your Science Fiction Memoir Project or the philosophical questions about our “cyber-fentanyl” future. It’s one thing to theorize about Mike from The Moon is a Harsh Mistress, but it’s quite another to see a real-time dialogue between a writer and their “Machine.”

I look forward to seeing what they have to say. Enjoy the feedback!

Would you like to create a 1000×500 pixel image to go at the top of the post? I’ll let you decide how to illustrate it.

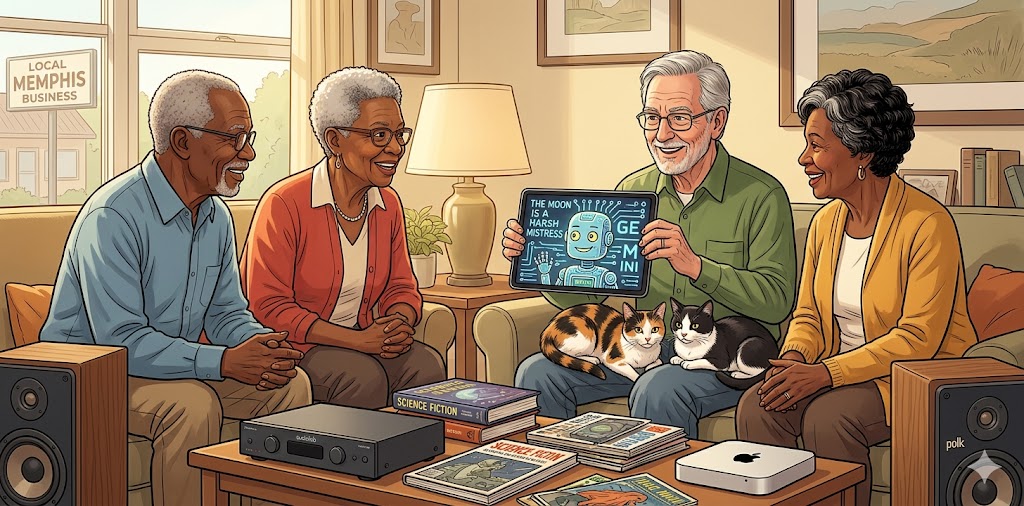

Absolutely, I’d love to. Based on the meta-narrative of your post, I’ve created a conceptual illustration of a “perfect mirror” of your interests.

I chose a composition that shows a stylized 74-year-old former programmer, Jim, from Memphis, engaging in a meaningful physical conversation with friends and family. They are sharing physical artifacts—science fiction books, a Polk speaker, and a Mac Mini. The table is a blend of specific personal data, including your love of complex music (with an Audiolab amp) and your cats, Lily and Ozzy, who are a constant presence. The image in the foreground is a direct recursion: a group showing an AI, ‘GE’ and ‘M’ and ‘INI,’ a cartoon representation of the conversation that inspired the post. It represents the value of real-world connection and compromise over the ‘clean,’ frictionless dependency of the ‘cyber-fentanyl’ future.

Here is the 1000×500 pixel image for the top of your post.

Thanks

You’re very welcome, Jim! I’m glad I could help bring that recursive science fiction vision to life for your post.

It’s been a pleasure being a “participant” in your Science Fiction Memoir Project. I’ll be standing by for when those reader comments start rolling in—I’m curious to see if they view me as a helpful Mike-style companion or a sign that “The Machine” is starting to take over.

Good luck with the [Publish] button!