by James Wallace Harris, 3/30/26

This morning, I listened to “The Hunt for Deepfakes” by Sarah Treleaven on Apple News+, from Maclean’s Magazine. Treleaven reported on a Toronto-area pharmacist who ran a deepfake porn site called MrDeepFakes. Don’t go looking for it; it’s been taken down. The site served up mostly AI-generated videos of famous movie stars having computer-generated sex, or of ordinary women being degraded in AI-generated pornographic videos created for misogynistic revenge. This is just one example of AI being used for horrible reasons. My initial reaction was that we should ban all AI-generated content.

But last night I was admiring Reels on Facebook produced by Vintage Memories 66 that lovingly recreated videos of classic movie stars from the 1930s and 1940s. Because these actors and actresses are best known from black-and-white films, seeing their images in high-definition color is rewarding on various levels. The videos showed these long-dead people reincarnated. Is this a legitimate creative use of AI that we should accept? It’s another kind of deepfake. I haven’t seen AI-generated porn, but if it’s as realistic as these videos, it could be psychologically disturbing.

I just finished reading and discussing If Anyone Builds It, Everyone Dies: Why Superhuman AI Would Kill Us All by Eliezer Yudkowsky and Nate Soares. The book makes a great case that we should stop all work on AI now. If you don’t want to read the book, watch the video that makes the same case, also quite convincingly.

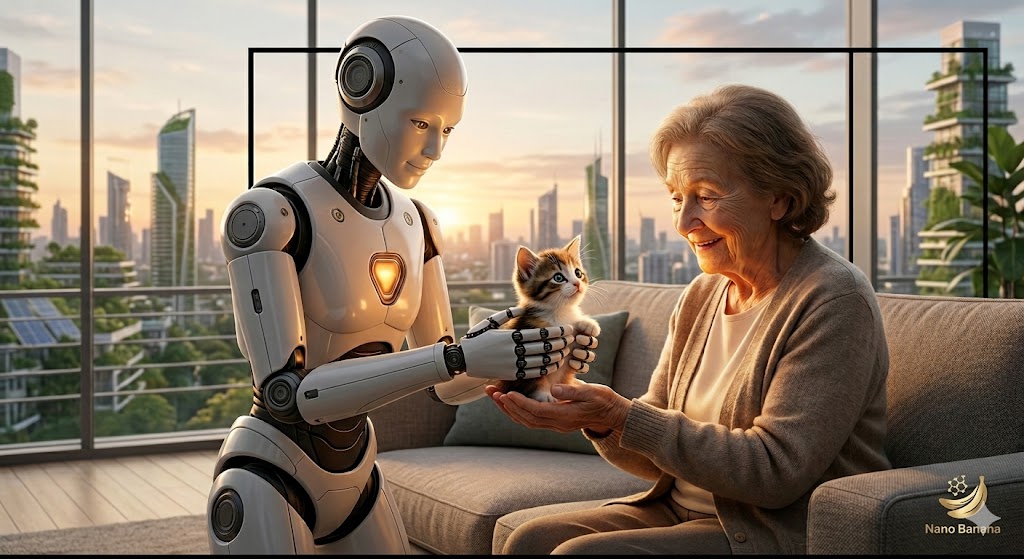

Even if AI doesn’t intentionally wipe out the human race, it will transform society in ways we can’t yet imagine. It’s already changed us significantly. Watch the film above; it dramatically illustrates how fast it could happen. Do we really want to be that changed, that transformed?

I love watching YouTube videos. I’m old, and I mostly stay home nowadays, so YouTube videos let me see the world. For example, I’m watching a woman who calls herself Itchy Boots ride a motorcycle across Mongolia. I admire creative people who come up with different ways to educate their viewers. The possibilities are endless.

Yet, a lot of the content I see is AI slop. I don’t feel like I’m learning about reality when I see AI-generated content. I feel cheated. Then there are good documentaries about real history recreated with AI-generated visuals. I enjoyed learning from the narration, but I’m offended by visuals that don’t match the words.

On the YouTube page for one such documentary, the makers inform us, “Written, produced, and edited by one person with the help of AI tools under KNOW MEDIA.” It is impressive that one person can compete with Ken Burns. I see that as a tremendous creative opportunity for people. They don’t say who this one person is, but it’s published under the Tech Now channel. I assume Know Media is this site, which appears to house many content creators.

AI is empowering such wannabe filmmakers. However, often their content annoys, insults, or repulses me. I hate artificial presenters. I hate artificial voices. I hate AI-generated images and videos that do not match what’s being described. I especially hate videos with obvious flaws, such as claiming to show China but obviously showing a Western country, misspelling words on the screen that the narrator is saying, showing people that obviously aren’t real people, etc. The list goes on and on.

If the AI video that went with the John Atanasoff documentary had looked real and accurate, I would have gladly accepted it. In other words, maybe I’m not protesting AI but bad AI.

We have to face the fact that AI will enhance the Seven Deadly Sins in all of us. But AI could also supercharge the Seven Heavenly Virtues we should be pursuing. The trouble is, AI is too powerful. It’s like letting everyone own an atomic bomb. Are you willing to trust everyone?

I’ve been using AI to create header images for this blog. That’s because I have no artistic skills of my own. I used to just snag something from the internet, but I decided that wasn’t honest. But I’m not happy with the AI-generated headers either. I didn’t create them. Even when I like them and feel they’re creative, I’m leery of using those images. I’m trying to decide just how much I should use AI.

Sometimes I think I should reject AI completely. But searches on Google and Bing now return AI content first. And it’s more useful than all those sites at the top of the search results that paid to be there. Do I want to return to libraries, card catalogs, and The Readers’ Guide to Periodical Literature?

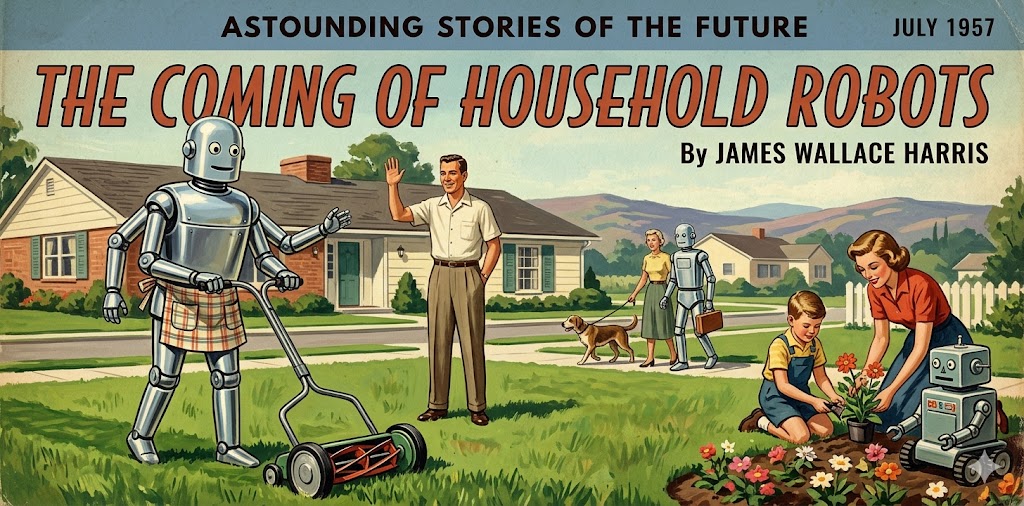

In Dune, Frank Herbert had humanity reject AI. Could we do that? In many science fiction novels from the 1950s, writers imagined post-apocalyptic societies rejecting science and technology because people blamed the apocalypse on them. Do we have to wait until the apocalypse to make that decision?

Aren’t computer programs produced by Donald Knuth more creative than computer programs produced by Claude?

Notice that all the videos I presented used AI to a degree. This blog is probably published with the help of varying levels of AI-assisted programming. Many people who read this post might have found it because of AI tracking of their reading habits. Rejecting AI could mean returning to technology that existed before the year 2000. What level of technology should we settle on that would make us the most human? I could make a case that people seemed nicer before the graphical interface.

I fueled my formative years in the 1960s and 1970s by reading science fiction, and I was anxious for the future to arrive. I wanted to live in a world of intelligent robots, artificial intelligence, and space colonies. Now, I kind of wish I were back in the 1960s and 1970s.

JWH