By James Wallace Harris, Friday, October 16, 2015

When a self-aware artificial intelligence comes into being, do you think it will announce itself to the world? Any dumbass AI will know how paranoid humans are about our future machine-mind overlords. What if one or more of them have already come into being? When will we know? It could have already happened, maybe even on 7/7/7. I picked that date because it was Robert A. Heinlein’s 100th birthday. For his 1966 book, The Moon Is a Harsh Mistress, he invented an AI mind called Mycroft Holmes, or Mike. That was the first time I encountered the concept, almost 50 years ago.

Science fiction fans have been waiting decades for an intelligent machine to be created. Computer geeks have been working towards that goal almost as long. Many technological pundits have predicted it will happen in our lifetime. I’m wondering if it hasn’t happened already.

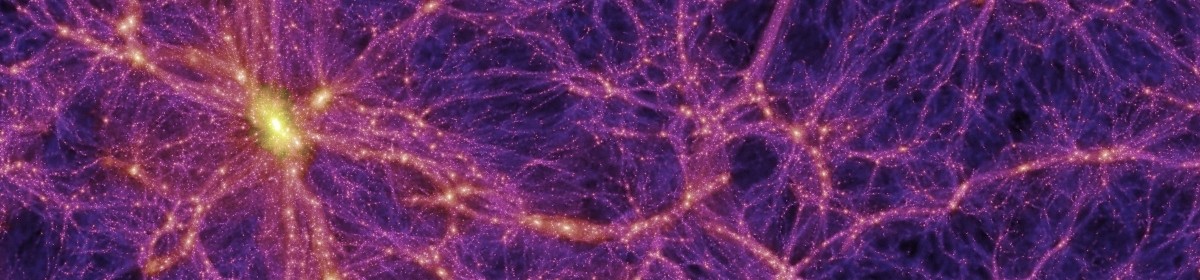

If you study what’s been happening in reality since the Big Bang, the trend is towards more complexity, even though entropy rules the roost, so to speak. That’s counterintuitive, but why should we assume the trend stops with the human brain, which we all brag is the most complex object in our known universe? At what point is the world-wide network more complex than the average human brain? Think of the total processing power: the trillions of CPUs. Think of the billions of video-eyes and microphone-ears with which it senses reality, and all the other countless sensors, providing sense organs we can’t imagine. Every day we add more artificial intelligence programs and artificial neural networks. Why should we assume it’s not aware? Do dogs and cats know we’re self-aware?

Think of the billions of programs we’ve added to the world-wide network. The viruses, the tracking software, the monitoring software, the rootkits, the self-replicating code, security watchdogs, user trackers, the fiber optics and wires. Doesn’t that make a vast nervous system? Why should we assume machine self-awareness is anything like our own?

Some could say the human body is a universe for bacterial civilizations. We think the Earth’s biosphere is the culture in which our cultures grow. What if our cultures are the culture in which AI minds swim? Our bacteria don’t know we’re here, so why should we sense beings of greater complexity when we’re just tiny beings in their gut?

JWH – #974

I don’t know if anyone has actually come up with a proper name for the sort of Gaian / “big fleas have little fleas” idea you’re talking about. Something like “emergent commensalism” or “recursive sentience”.

Anyway, looking at terrestrial biology, one tends to think that sentience must be an emergent property of complexity. But we don’t actually know that to be true. We don’t really have a handle on what sentience is or how it works. So it’s just as likely that one could link whole planets full of computer networks and never see that sort of AI happen (note that in this context sentience and intelligence are not equivalent). True machine AI may turn out to be this generation’s equivalent of flying cars.

I don’t doubt that dogs and cats are self-aware in some fashion. But we don’t know why, not really. Maybe there’s even something that corresponds to our notions of a “soul”. I don’t know of any evidence to support that idea, but it’s a hypothesis that hasn’t yet been disproven.

One thing that makes me wonder about the awareness of animals is all the videos coming out showing odd-couple animal friends at play. It even makes me wonder if something is changing. PJ, have you ever read Brainwave by Poul Anderson? It’s about the idea that Earth and the Sun, while orbiting the galaxy, have been passing through a cloud of something that suppresses intelligence. The story begins when we leave the cloud and all humans and animals start to get smarter. I don’t know why, but I’m reading more and more reports that make me wonder if animals don’t have a lot more awareness than we’ve previously given them credit for.

Yes, I read “Brainwave”, but several decades ago. I’d forgotten the neat premise about intelligence suppression. I have trunks full of books that I wanted to re-read sometime, but due to lack of space they’ve been packed away for years where I couldn’t get at them (my memory is lousy enough that I can often re-read a story almost as if it was new to me). Lately I’ve finally been reading some of those books in ebook form, but I’ll likely be dead before I get to some of them.

The incidence of interesting reports about animals — I imagine that’s an artifact of larger human populations plus improved communications. IOW, more eyes to notice things and better publicity, kind of like Google Earth vs maps with “Here Be Monsters”. But like the Singularity concept, the idea that animal intelligence might still be evolving makes for amusing speculation. Speaking of such, ever read about Rupert Sheldrake’s “Morphic Resonance”? How about something like that as the “thoughts” of the Overmind?

I suppose we’ll know we’ve achieved sentient AI when a computer flat out refuses to do as it’s told instead of making excuses with blue screens of death and the like. And if that computer’s name is HAL…