Will we see self aware robots in our lifetime? At least the most rabid proponents of the Singularity think so. Science fiction created the idea of space travel, and now humans travel in space. Science fiction’s next big speculation about first contact hasn’t panned out yet. Neither has time travel. But after those concepts came robots, and science fiction has prepared us well for that near future.

Are we ready for thinking machines? How would our lives be different if intelligent robots existed? I think it’s going to be a Charles Darwin-sized challenge to religion, especially if robots become more human than us. And by that I mean, if robots show greater spiritual qualities, such as empathy, ethics, compassion, creativity, philosophy, charity, etc. Is that even possible? Imagine a sky pilot android that had every holy book memorized along with every book ever written about religion and could eloquently preach about leading the spiritual life.

Just getting robots to see, hear and walk was a major challenge for science, but in the last decade scientists have been evolving robots at a faster pace. We’re an extremely long way from robots that think, much less show empathy, but I think it’s possible. I think we need to be prepared for a breakthrough. Sooner or later computers that wake up like in The Moon Is a Harsh Mistress, When H.A.R.L.I.E. Was One, and Galatea 2.2 will appear on the NBC Nightly News. How will people react?

There are two schools of robot building. The older is to program machines to have all the functions we specify. The other is to create a learning machine and see what functions it acquires. As long as robots have function calls like

show_empathy()

does it really count as true intelligence? I don’t think so, but do we show empathy because it’s built into our genes or because we learn it from people wiser than us?
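Here is a rough Python sketch of how the two schools might differ, offered only as an illustration. Every name in it (`show_empathy`, `LearningRobot`, `respond`, `feedback`) is hypothetical and invented for this example, and the "learning" is just a running tally of rewards, far simpler than anything a real learning machine would need.

```python
from collections import defaultdict


def show_empathy(situation: str) -> str:
    """School one: the programmer specifies the response in advance."""
    # The situation is ignored; the same canned reply always comes back.
    return "I'm sorry to hear that."


class LearningRobot:
    """School two (toy version): responses are acquired from feedback."""

    def __init__(self) -> None:
        # scores[situation][response] is the total reward seen so far.
        self.scores = defaultdict(lambda: defaultdict(float))

    def respond(self, situation: str, options: list[str]) -> str:
        # Choose the response with the highest accumulated reward.
        # With no experience yet, all scores tie and the first option wins.
        return max(options, key=lambda option: self.scores[situation][option])

    def feedback(self, situation: str, response: str, reward: float) -> None:
        # Reinforce (or discourage) a response based on how people reacted.
        self.scores[situation][response] += reward


robot = LearningRobot()
options = ["Cheer up.", "That sounds hard."]
first_try = robot.respond("loss", options)
robot.feedback("loss", "Cheer up.", -1.0)
robot.feedback("loss", "That sounds hard.", 1.0)
after_learning = robot.respond("loss", options)
```

In the first school the empathy is whatever the programmer typed. In the second, nobody wrote the final answer down, yet the question of whether a tally of rewards counts as empathy remains just as open.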

Jeff Hawkins has theorized that our neocortex is a general-purpose pattern processor. What if we could build an artificial neocortex and let robots grow up and learn whatever they learn, the way people do? Would that be possible? This is why I see artificial intelligence as a threat to religion in the same way evolution threatens the faithful. If we can build a soul it suggests that souls are not divine. It also implies souls won’t be immortal because they are tied to physical processes.

Science fiction has often focused on either warnings about the future or promises of wonder. Robots are commonly shown as metal monsters wanting to exterminate mankind. Other writers see robots as our allies in fighting the chaos of ignorance. Still others don’t doubt that intelligent machines can be built, but fear they will judge us harshly.

What if we create a species of intelligent machines and they say to us, “Hey guys, you’ve really screwed up this planet.” Is it paranoid to worry that their solution will be to eliminate us? Is that a valid conclusion? Life appears to be eat or be eaten, and we’re the biggest eaters around, so why would robots care? In fact, we must ask, what will robots care about?

They won’t have a sex drive, but they might want to reproduce. They should desire power and resources to stay alive, and maybe resources to build more of their kind, not to populate the world, but merely to build better models. Personally, I’d bet they will quickly figure out that Earth isn’t the best place for their species and want to claim the Moon for their own. I think they will say, “Thanks Mom and Dad, but we’re out of here.” Our bio rich environment is hard on machines.

Once on the Moon I’d expect them to start building bigger and bigger artificial minds, and develop ways to leave the solar system. I’d also expect them to get into SETI (or SETAI), and look for other intelligent machine species. Some of them would stay behind because they like us, and want to study life. Those robots might even offer to help with our evolution. And they might expect us to play nice with the other life forms on planet Earth. What if they acquire the power to make us?

On the other hand, people love robots. If we program them to always be our equals or less, I think the general public will embrace them enthusiastically. Many people would love a robotic companion. Before my mother died at 91, she fiercely maintained her desire to live alone, but I often wished she at least had a robotic companion. I know I hope they invent them before I get physically helpless. Would it reduce medical costs if our robotic companions had the brains of doctors and nurses and the senses to monitor our bodies closely?

My reading these past few months has been a perfect storm of robot stories. I’m about to finish the third book in the Hyperion Cantos by Dan Simmons, which has developed into war between the Catholic Church and the AI TechnoCore. I’m rereading The Caves of Steel, the first of Asimov’s robotic mystery novels. I’m also reading We Think Therefore We Are, a short story collection about artificial intelligence.

Last month I read Martian Time-Slip by Philip K. Dick. At one point repairman Jack Bohlen visits his son’s school to fix a teaching robot. Each robot is fashioned after a famous person from history. That made me wonder: if each of our K-12 students had a robotic mentor, would we even be in the educational crisis that so many write about? Sounds like even more property taxes, huh?

Well, what if those mentors were cheap virtual robots that communicated with our children via their cell phones, laptops or gaming consoles? Would kids think of it as cruel nagging harassment or would they learn more with constant customized supervision? What if their virtual robot mentor appeared as a child equal to their age and grew up with them, so they were friends? Or imagine, as an adult going back to college, having a virtual study companion.

Now imagine if our houses were intelligent and could watch over us and our property. Wouldn’t that be far more comforting than a burglar alarm system? For people who are frightened of living alone this would be company too. And it would be better than any medical alert medallion. Think about a house that could monitor itself and warn you as soon as a pipe leaked or its insulation thinned in the back room. And what about robotic cars with a personality for safety?

For most of the history of robots people thought of them as extra muscle. Mechanical slaves. People are now thinking of them as extra intelligence, and friends. What will that mean to society? Anyone who has read Jack Williamson’s “With Folded Hands” knows that robots can love and protect us too much. Would having helping metal hands and AI companionship weaken us?

Can you imagine a world where everyone had a constant robot sidekick, like a mechanical Jeeves, or a Commander Data? Would it be cruel to have a switch “Only Speak When Spoken To” on your AI friend? Would kids become more social or less social if they all had one friend to begin with? Would it be slavery to own a self-aware robot? And what about sex?

Just how far would people go for companionship? I’ve already explored “The Implications of Sexbots.” But I will ask again, what will happen to human relationships if each person can buy a sexual companion? What if people get along better with their store-bought lover than people they meet on eHarmony? I’m strangely puritanical about this issue. I can imagine becoming good friends with an AI, but I think humping one would be a strange kind of perversion. I’m sure horny teenage boys would have no such qualms, and women have already taken to mechanical friends and might even like them better if they look like Colin Firth. To show what a puritanical atheist I am, I would figure this whole topic would be a non-issue, but research shows the idea of sex with robots is about as old as the concept of robots.

Ultimately we end up asking: What is a person? The faithful like to believe we’re a divine spark of God, a unique entity called a soul. Science says we’re a self-aware biological function, a side-effect of evolution. We are animals that evolved to the point where we are aware of ourselves and can separate reality into endless parts. If that’s true, such self-awareness could exist in advanced computer systems. Whether through biology or computers, we’re all just points of awareness. What if the word “person” only means “a self-aware identity”? Then, how much self-awareness do animals have?

Self-awareness has a direct relationship with sense organs. Will robots need equal levels of sensory input to achieve self-awareness? We think of ourselves as a little being riding in our brain just behind our eyes, but that’s because our visual senses overwhelm all others. If you go into a dark room, the location of your sense of self will shift and your ears will take over. But it is possible to be your body. Have you ever noticed that during sex your center of awareness moves south? Have you ever contemplated how illness alters your sense of awareness? Meditation will teach you about physical awareness and how it relates to identity.

Can robots achieve consciousness with only two senses? Or will they feel their electronics and wires like we feel our bodies with our nerves? If so, they will have three senses. We already have electronic noses and palates that far exceed anything in the animal world. We only see a tiny band from the E-M spectrum. Robots could be made to “see” and “hear” more. Will they crave certain stimulations?

We know our conscious minds are finely tuned chemical balances. Disease, drugs, and injury throw that chemical soup recipe of self-awareness into chaos. How many millions of years of evolution did it take to tune the human consciousness? How quickly can we do the same for robots? Would it be possible to transfer the settings in our minds to mechanical minds?

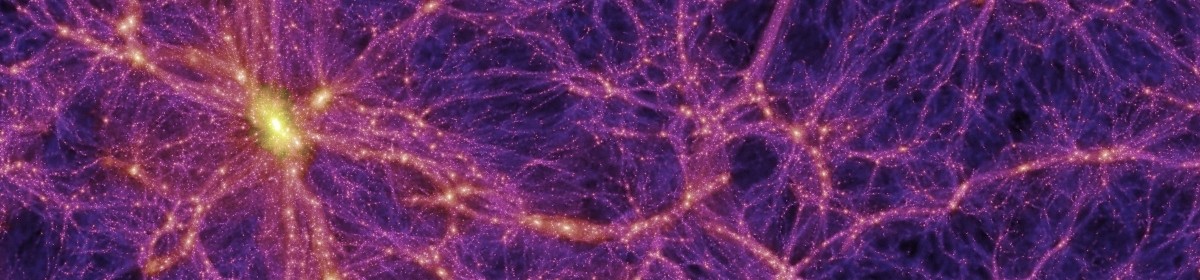

There are many people living today who refuse to believe our reality is 13.7 billion years old. They completely reject the idea that the universe is evolving and life represents relentless change over very long periods of time. Humans will be just a small blip on the timeline. What if robots are Homo Sapiens 2.0? Or what if robots are Life 2.0? Or what if robots are Intelligence 2.0? Doesn’t it seem strange that just when it’s time to go to the stars, we invent AI? Our bodies aren’t designed for space travel, but robots are.

In Childhood’s End and 2001: A Space Odyssey, Arthur C. Clarke predicted that mankind would go through a transformation and become the star child, our next evolutionary step. What if he was wrong, and HAL is the next step? We are pushing the limits of our impact on the environment at the same time as we approach the Singularity. I’m not saying we’re going extinct, although we might, but just wondering if we’re going to be surpassed in the great chain of being. Even among atheist scientists humans are the crown of creation, but we figured that was only true until we met a smarter life form from the stars or built Homo Roboticus.

JWH – 3/11/10

Interesting, Jim. Lots of things to think about. But note that we human beings are animals. It’s not just that we’re descended from animals, we ARE animals. We have instincts, emotions, automatic responses of all kinds.

I’ve often wondered – without a flesh and blood body, would it even be possible to fear? If your mind could be put into a robot’s body, could you be afraid, without the biological mechanism for feeling fear? I’m pretty sure it works in the opposite direction, that you could be made to fear just by manipulating the responses your body makes at such times. So can we really separate our human minds from our human bodies?

Robots (or artificial intelligence – I suspect that such creatures would have no concern for a body at all) might be able to think someday, but they won’t feel hunger, thirst, fear, love, lust, anger, joy, embarrassment, or anything else from our animal natures, unless it is deliberately programmed into them (and then it would just be an imitation, an approximation).

So why would they want to reproduce? Why would they “want” anything? Why would they hate or love people? Why would they kill or save us? None of this would apply unless it was programmed into them. Programmed emotions wouldn’t be any less “real” than our own, which are a product of flesh and blood bodies that evolved to be this way. But why would we program any, or certainly most, of our emotions into robots in the first place?

It’s hard to think about robots without imagining them as human beings, with at least SOME human emotions and human feelings. But I can imagine self-aware artificial intelligence working for human beings – but not happy about it, not content, not frustrated, not bored, not angry, not wistful, not ANYTHING, just without any emotions or thoughts that aren’t directly related to what it is doing. Yet still “intelligent,” in some theoretical kind of way that we might not understand at all.

A very interesting article indeed – it brought to mind a boat-load of stories I’ve read over the years, as well as the occasional scientific article. I think you’re postulating man crossing the line from created to creator, something we’ve already done in part through breeding animals for various characteristics, to today’s genetically modified foods. Although we haven’t expected the intelligence of creativity, decision, desire or partnership from our creations, the question of whether our creations might surpass us, and the consequences if they do, is one well worth considering.

I’ve read (a true account) about a robot which can move about at will in its lab, and record what it observes from different standpoints. I suspect that self-awareness could evolve from this – if it understands that the person who is now standing where it was yesterday, is seeing and hearing different things than it recorded then; eventually it understands that no other being or creation is having the same experiences it is, or had, and there we are at self-awareness. My sister thinks this is much too big a leap for any machine to make.

I would say that Asimov – perhaps inadvertently – addressed religion with his 3 laws of robotics, in making safety of humans the paramount guide. Although for now it is simply a function for robots, it may one day be understood by them as the concepts of compassion, charity, etc. Would intelligent machines find any value in emotions or a spiritual life? Hard to believe, once they’ve analyzed the destruction of “religious wars”.

What do you imagine would cause computers to “wake up”? Would the command to show an emotion be equivalent to the real thing? Or is this in the eye of the beholder? If their emotional reaction is taken at face value, the human would react with more emotion – I believe such biologically based functions would be too complex for any machine to understand, although I don’t doubt they could be complexly programmed to mimic “appropriate” responses for a brief time, before bogging down. Throughout history humans have struggled with emotions which have been part of us since birth – something so difficult many people dream of an emotionless world – we even have a fascination with beings who have no emotions such as Mr. Spock and Data (who ironically yearns for emotions to become human, whereas Mr. Spock does all he can to suppress them.)

Japan has been using robot companions for the elderly for some time, and it’s a great surprise to me that seniors report liking it very much. Yet why do some people prefer less intelligent animal companions? Is it due to their emotions? Animals have already been shown to be effective “therapists” (physical, mental, and emotional) for a variety of human problems. I note with alarm that many people say their Roomba automatic vacuum is a friend they look forward to “interacting” with every day!! We still consider those who have few emotional interactions to be stunted – and recall how many mass murderers are defined as “loners”. Can a simple machine or untrained pet keep the human on the sane side of reality?

Robot mentors for the young? I vote a resounding “NO!” I can remember when the first recorded books came out – it was thought young children could ‘read to themselves’. These books were of little interest – the children actually craved human input, and expansion on the book even when they weren’t young enough to ask questions. Today we have evidence that one-way communication such as videos does more harm than good, creating only partial understanding, and short attention spans. I don’t believe machines could ever impart curiosity, initiative, or other emotion-based actions, as they have no experiences of their own to impart. These are characteristics we’ve chosen to define the human race – inventors, explorers, and philosophers. Without self-awareness I question whether robots can even teach deductive reasoning. Of what value is all the knowledge in the world if it’s not put to use? The current day counterpart is constant use of cell phones or computers – talking about an experience is not the same as HAVING the experience, and in my view robots couldn’t absorb what we do from the experience. In essence, it would be a world without art, although many have postulated that robots can be programmed to be creative. But part of the creative process is enjoyment…

Without emotions, how could robots understand the finality of death, or the horror of a total life-long disability? If robots were existing at a human-superior level, craving more implies enjoyment. Why do we enjoy things, and some more than others? Some prefer the beach, others the mountains; some days you prefer a blue shirt over a white one. What drives these preferences? And since 99% of the time the results of these types of personal preferences affect only the minor emotions of the individual, what would be the attraction for the robot?

Will mechanical self-awareness equal slavery? I don’t think self-awareness equates with initiative, I think there’s a big gap between those 2 concepts. A robot could be self aware in monitoring its functions, but that doesn’t imply it has desires or goals. What do YOU think would be the advantages of a self-aware machine?

Do you imagine robots only as entities separate from humans? Would most people want to be part machine if the “reward” was increased intelligence? (Answer – look how many people still resent the need for glasses or hearing aids, in spite of the huge benefits.) We’ve embraced the computer, but usually not for its information – rather, its games.

Is the “private”, “personal” definition of “me” at a similar level for most people? Is it inborn or learned? Humans crave change, even to a point of endangering lives – I don’t think change for the sake of emotions such as curiosity or satisfaction is a concept robots could embrace.

Is human self-awareness a mere evolutionary quirk? Surely the herd mentality is a strong successful one on planet Earth. Is self-awareness inevitably linked to a soul? My religious instruction left me believing that a soul was created by mental processes – CHOOSING to do “right”, or wrong – only reactive emotions involved, afterward – not required for the soul to grow.

Of course you’re aware there have been many stories about machines which destroy man in protecting him from himself. With our current governments around the world, I think it’s too easy for protective robots to become tools of the government for control of people. For example, cars could be made with a top speed of 40 MPH, but this may not be enough to escape various situations. Or as a more concrete example, many people now oppose the RFID chip which may soon become required, or used without our knowledge, under the guise of (only) benefits for all.

I agree that robots may quickly discover other planets are much more suited to them than earth, particularly if they choose, or are programmed, to work independently, in jobs that aren’t solely to support humans. Perhaps independent thinking would eventually evolve in a human-free society.

Thanks for the cerebral workout! Which recent robot story have you enjoyed most, and in what aspect?

To answer your last question first. By far and away I loved The Naked Sun best, but I don’t know if it’s the best story about robots. The whole story was just so involving. I just finished Endymion, the third of Dan Simmons’s Hyperion Cantos series. It has androids and AIs of all sizes, yet they all feel too human. I need to find some stories where writers have AI or robots telling their story in first person. Becky, do you know any such stories? Right now I’m reading Lady Chatterley’s Lover and D. H. Lawrence is great at exploring people’s motivations. It’s amazing what he points out. We need a D. H. Lawrence to imagine what will motivate robots.

When we look closely at what motivates us we can usually find its origins in biology. But I don’t know if that’s true for our self-awareness. When you ask, “What do YOU think would be the advantages of a self-aware machine?” – do you mean to us, or to them? Like you suggest, they could be aware but lack motivation. I keep going back to the D. H. Lawrence book. At one point he’s comparing the motivations of various men, and he reviews those men who pursue the intellectual life. One of the characters is an astronomer and he says he has the stars to motivate him. But Lawrence goes deeper. He says we use intellectual ideas to compete with one another, to make our identity, often in petty ways. Of course this is analogous to the biological impulse for males to fight in front of females. If robots become self-aware will they have any kind of motivation? Will we have to hardwire their desires?

I’m afraid I have to get ready and go to work, so I must return to your reply later and write more.

Jim, you might be interested in this post, “What Does a Robot Want?”

http://www.shamusyoung.com/twentysidedtale/?p=7556

This is quite similar to my own thoughts, as I replied here earlier. When I read this recent effort by Shamus Young, I thought about commenting on my own blog. But as you can imagine, I’m swamped with things to write about already!

Briefly, we can’t even reliably define “intelligence” when referring to human beings, and I suspect that “artificial intelligence” will be even more difficult. Will robots have needs, or at least wants, at all? Only if we program them that way. And why would we? Even Asimov’s 3 laws could be dangerous.

Bill

READ the novel Computer One by Warwick Collins!

That novel shows, in a scientifically accurate and logical way, how a newly awakened AI or robot would behave after becoming sentient or self-aware, and how it would treat humanity.

I also recommend GURPS Reign of Steel by David L. Pulver, and this analysis of the AI Skynet after it becomes sentient and why it declares war against humankind:

http://www.goingfaster.com/term2029/skynet.html

http://www.goingfaster.com/term2029/ascensionp1.html