What if people weren’t the crown of creation? What if we had to play second banana to Humans 2.0, AI machines, visiting aliens, cyborgs or other potentially smarter beings? I think our fear is they would treat us like we have treated chimpanzees. What if intelligent machines emerge, homo sapiens superior evolve and we make SETI contact, and suddenly we’re number four on the totem pole of intelligence?

Unless we destroy the planet and make ourselves extinct, sooner or later we’re going to be replaced at the top of the smart chart. How will that affect us personally, our society, and how we think about our future? Most primitive cultures when contacted by modern humans haven’t fared well. Science fiction has been preparing us for centuries, but I’m not sure if science fiction has done a good enough job covering all the possibilities.

Possible Replacements

It doesn’t take a lot of time to think up possible replacements who could claim our throne as being the smartest beings on the planet.

- Genetically enhanced humans

- Naturally evolved humans

- Artificial beings

- Cyborgs

- Uploaded humans

- AI super computers

- Robots

- Androids

- Alien visitors

- SETI contact

I’m not sure we aren’t already seeing natural selection at work in our species. Our severely polarized society, divided between liberals and conservatives, between the scientific and the religious, between the secular and the sacred, might already be moving us towards separate species. The conservative faction that clings to the past is becoming anti-intellectual and anti-education. If the scientifically minded only breed with the scientifically minded, won’t they produce a line of smarter humans? Of course, natural selection doesn’t always produce successful adaptations. Some people have suggested the rise of autism comes from overly smart people mating with other overly smart people. It might turn out that intelligence isn’t an important trait, or one vital for survival.

Then there is genetic engineering. Think of the movie Gattaca, the old classic Brave New World, or Beggars in Spain by Nancy Kress. We’re getting very close to making customized Homo sapiens sapiens. In just a few generations we could have a new species that makes us look outdated. Gattaca was a salute to the natural human, but was it realistic? We loved Vincent for competing and winning, but can humans really compete with super humans? Again, we’re assuming that intelligence is a trait that wants to win out.

We might even be doing something now that will lead to more naturally evolved humans. As more women select Caesarian sections for childbirth, we’re changing an important factor that might lead to change. Our brain size has always been limited by the size of the birth canal – now it’s not. Over time we might see new adaptations. Read Greg Bear’s Darwin’s Radio.

Work with our genome has shown that DNA is an erector set for building biological machines. How soon before we start creating new recipes? Whole new artificial beings could be created, or animals could be uplifted to human intelligence and beyond. Think about the science fiction of Cordwainer Smith and H. G. Wells’s The Island of Dr. Moreau.

Google Glass might be our first step toward becoming cyborgs with auxiliary brain power. Wearable computers, artificial limbs and senses could lead to supercharged brains and all those science fiction scenarios where people jack into machines.

I’m not a big believer in uploading brains into computers, but a lot of people are. Now that my body is getting old and failing, the idea is becoming more appealing. People like Ray Kurzweil hope to find immortality this way, and such ideas have been the theme of many SF stories. Sometimes those stories are wished-for fantasies, and sometimes they are feared nightmares.

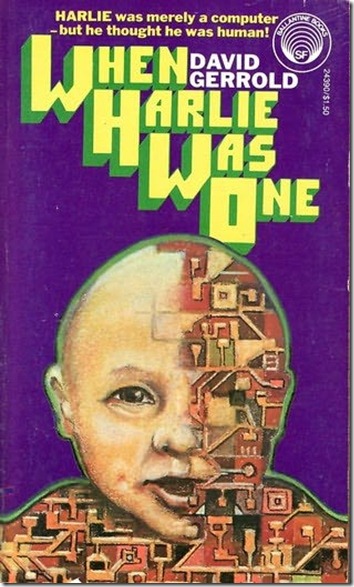

What I’m waiting for is the technological singularity. AI super computers should be just around the corner if I can live long enough. Many people fear AI minds with stories ranging from “Press Enter _” by John Varley to the latest movie Transcendence, but I’m hoping machine minds will be benign, or even indifferent to humans and animal life.

Who Do You Want To Do Your Brain Surgery?

After we get bumped down the intelligence list, how is that going to change society? If you need brain surgery would you want a human or post-human holding the scalpel? Or would you prefer an AI mind that is 16 times smarter than a person? If a human and a robot were running for President, who would you vote for? Liberals like smart dudes, but conservatives don’t. They like old friendly duffers like Ronald Reagan. But what if the robot had the combined intelligence of all of Congress, the Supreme Court and every CEO in America?

We’re already designing smart cars to drive us because it will be safer, and we already have planes with automatic pilots. How long before we have machines doing everything else for us? Will we just sit around and eat bon-bons?

If we share Earth with beings more intelligent than us, won’t we ultimately let them run things? What if they were smart enough to tell us how to handle global warming so we suffered the least, paid the least, but got the maximum benefits from changing our lives, thus making the Earth’s biosphere more stable? What if they gave us wealth and security, and protected all the other species on the planet as well? Would we say, hell no! Would we say we prefer to take our chances with failure just so we could make our own decisions?

Democracy v. Plutocracy v. Oligarchy v. Cyberocracy

We like to think we currently rule ourselves through collective decision making, but more than likely, we could already be an oligarchy or plutocracy, ruled by a limited number of rich people. What if we could create powerful super computers that ruled us politically and ran the economy? Would you prefer to be ruled by a handful of rich people, or a handful of smart machines? Remember who flies your 787 now. This idea scares the hell out of most people, but just how smart was George Bush at running the country, or how much better is Barack Obama, who most people would say is brainier? What if decisions about taxes weren’t made by people filled with emotions? What if we told the machines to maximize freedom, minimize taxation, maximize security, health and wealth, minimize pollution and environmental impact, and so on, and then just let them figure out the best way?

What If Post-Humans and Robots Are Atheists?

How will ordinary humans feel if their replacements reject God? What if massive AI brains see nothing in reality to validate religion? What if SETI aliens say, “What is a God?” One common result of Western civilization’s impact on newly contacted primitive peoples is their demoralization at losing their gods. Look what Europeans did to the Native Americans. How are we going to feel when we’re invaded by post-humans and intelligent machines? Will they make us move onto reservations?

The Art and Science We Can’t Imagine

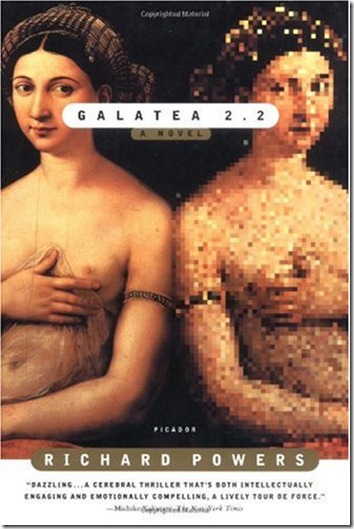

What if our minds cannot feel the art and understand the science of our intellectual descendants? We can look back over thousands of years, to what our ancestors have imagined, built and perfected, and understand what they created. We know them because we’re an extension of who they were. When greater minds come after us, they will understand us, but will we know them? At what point will we no longer be able to follow in their footsteps? Whether we like it or not, our brains have limits. We’ve always been used to exploring at the edge of reality, so what happens when we become aborigines to beings who see us as the first beings, and they are the later ones? The ones who leave us behind.

Getting a PhD

Of course, being a scientist might not be as much fun if you had to compete with Human 2.0 folk, or AI minds. Vincent in Gattaca pushed himself to inhuman efforts to compete against gene-enhanced humans, but I’m not sure most people would do that. AI minds could do a literature search for a PhD and distill the results in no time. They would probably inherently know how to create and test a hypothesis, set up the experiments and research, and since they’d have math coprocessors in their brains, instantly do all the statistics. Could any Human 2.0 or 3.0 individual compete with AI minds that are 16 or 64 times as smart as a Human 1.0 is now?

Life as a Lap Dog

If we couldn’t be the top dog, would we want to be a lap dog? Or would we want to live like the Amish and exclude ourselves from the future modern world? Can you imagine a mixed society of Humans 1.0, Humans 2.0, AI minds, robots, cyborgs, androids, uplifted animals and artificial beings all coexisting happily, or even roughly happily? We don’t get along well with each other now, and we haven’t been too kind to our fellow animal citizens on this planet. But then, maybe we’re the problem.

I already know I’m not the smartest geek in the group now. I know I’m well down on the list of GRE scores. I’m not a boss or a leader. I’m not on the cutting edge of anything. And most people are like me. I putter around in my small land, ignoring most of the world. Maybe that’s why I’m not scared of being replaced at the top, because I’m nowhere near the top.

You know, here’s a funny thing. If an AI robot walked up to you at a party, one that has the brain power of 64 humans, what would you ask it? What are you dying to know? Is there anything the robot could tell you that would drastically change your life? I’d probably say to it, “You read any good books lately?”

JWH – 4/23/14