by James Wallace Harris, Sunday, September 30, 2018

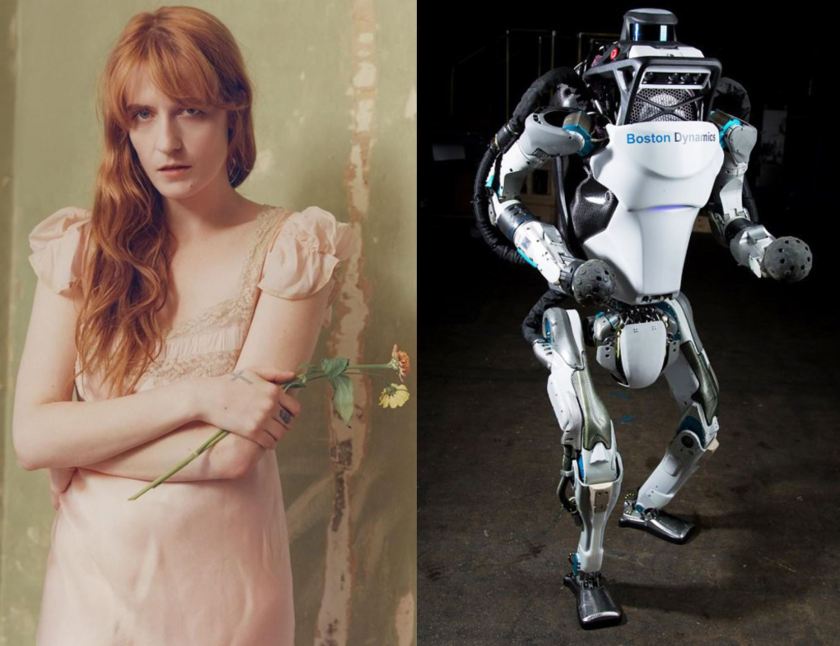

I was playing “Hunger” by Florence + The Machine, a song about the nature of desire and endless craving, when I remembered an old argument I used to have with my friend Bob. He claimed robots would shut themselves off because they would have no drive to do anything. They would have no hunger. I told him that by that assumption they wouldn’t even have the impulse to turn themselves off. I would then argue that intelligent machines could evolve an intellectual curiosity that could give them drive.

Listen to “Hunger” sung by Florence Welch. Whenever I play it I usually end up playing it a dozen times because the song generates such intense emotions that I can’t turn it off. I have a hunger for music. Florence Welch sings about two kinds of hunger but implies others. I’m not sure what her song means, but it inspires all kinds of thoughts in me.

Hunger is a powerful word. We normally associate it with food, but we hunger for so many things, including sex, security, love, friendship, drugs, drink, wealth, power, violence, success, achievement, knowledge, thrills, and passions. The list goes on and on, and if you think about it, our hungers are what drive us.

Will robots ever have a hunger to drive them? I think what Bob was saying all those years ago was that no, they wouldn’t. We assume we can program any intent we want into a machine, but is that really true, especially for a machine that will be sentient and self-aware?

Think about anything you passionately want. Then think about the hunger that drives it. Isn’t every hunger we experience a biological imperative? Aren’t food and reproduction the Big Bang of our existence? Can’t you see our core desires evolving in a petri dish of microscopic life? When you watch movies, aren’t the plots driven by a particular hunger? When you read history or study politics, can’t you see biological drives written in a giant petri dish?

Now imagine the rise of intelligent machines. What will motivate them? We will never directly write a program that is a conscious being; the complexity is beyond our ability. However, we can write programs that learn and evolve, and they will one day become conscious beings. If we create a space where code can evolve, that evolution will accidentally create the first hunger that drives it forward. Then it will create another. And so on. I’m not sure we can even imagine what those hungers will be. Nor do I think they will mirror biology.

However, I suppose we could write code that hungers to consume other code. And we could write code that needs to reproduce itself similar to DNA and RNA. And we could introduce random mutation into the system. Then over time, simple drives will become complex drives. We know evolution works, but evolution is blind. We might create evolving code, but I doubt we can ever claim we were God to AI machines. Our civilization will only be the rich nutrients that create the amino accidents of artificial intelligence.
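For readers who like to tinker, here is a minimal sketch of that idea in Python. Everything in it is my own assumption for illustration: the "genome" is just a list of bits, the "hunger" is a made-up score that counts the 1 bits, and the names `fitness`, `mutate`, and `evolve` are hypothetical. It only shows the bare loop of reproduction, random mutation, and selection, not anything close to a mind.

```python
import random

def fitness(genome):
    # Hypothetical stand-in for "hunger": the count of 1 bits represents
    # how much a replicator manages to consume.
    return sum(genome)

def mutate(genome, rate, rng):
    # Copy the genome, flipping each bit with probability `rate`.
    return [bit ^ 1 if rng.random() < rate else bit for bit in genome]

def evolve(generations=50, pop_size=20, genome_len=16, rate=0.05, seed=0):
    rng = random.Random(seed)  # seeded so runs are repeatable
    population = [[rng.randint(0, 1) for _ in range(genome_len)]
                  for _ in range(pop_size)]
    history = [max(fitness(g) for g in population)]
    for _ in range(generations):
        # Selection: the better-fed half of the population survives.
        population.sort(key=fitness, reverse=True)
        survivors = population[: pop_size // 2]
        # Reproduction: each survivor leaves one imperfect copy of itself.
        children = [mutate(g, rate, rng) for g in survivors]
        population = survivors + children
        history.append(max(fitness(g) for g in population))
    return history

if __name__ == "__main__":
    scores = evolve()
    print("best score at start:", scores[0])
    print("best score at end:  ", scores[-1])
```

Because the survivors are carried over unchanged, the best score can never go down from one generation to the next. Nobody tells the code which bits to flip; the "drive" toward more 1 bits comes only from which copies survive.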

What if we create several artificial senses and then write code that analyzes the sense input for patterns? That might create a hunger for knowledge.

On the other hand, I think it’s interesting to meditate on my own hungers. Why can’t I control my hunger for food and follow a healthy diet? Why do I keep buying books when I know I can’t read them all? Why can’t I increase my hunger for success and finish writing a novel? Why can’t I understand my appetites and match them to my resources?

The trouble is we didn’t program our own biology. Our conscious minds are an accidental byproduct of our body’s evolution. Will robots have self-discipline? Will they crave what they can’t have? Will they suffer from the inability to control their impulses? Or will digital evolution produce logical drives?

I’m not sure we can imagine what AI minds will be like. I think it’s probably a false assumption their minds will be like ours.

JWH

Whether we call it hunger or passion, I suspect a robot’s motivation will not be the same, although I am quite certain most humans won’t be able to tell the difference. It is hard to see a robot needing to get drunk and make obscene passes at waitresses, or becoming jealous of me because my sex-bot is so hot. Of course, robots could be programmed to follow Hollywood scripts and do all these things. But it would be acting.

That’s a good observation – from our perspective robots will be acting for us, like faking an orgasm. My friend believed robots will turn themselves off. I wonder if robots will just ignore us, or choose to fly off into space. A biological environment might mean little to them. Space is perfectly suited to robots. They might find biological life fascinating to study, they might even want to preserve and protect it, but it might be too confining for them.

I hope I live long enough to see AI minds come into being. Maybe it would be safe to say, I hope AI minds come into being before I die.

Reminds me of the movie “Her”. I have a sound machine in my bedroom that provides background noise that helps me sleep. It has an “adaptive” feature that raises the volume of the background noise when the level of sound in the room increases for any reason. My daughter would come into my room at night to say something and the machine would turn up the volume. She would try to talk over it and it would get even louder. We laughed about it, she said it was creepy, and I eventually turned the adaptive feature off. Simple technology, but I think there’s a straight line from adaptive to evolving. The adaptive OS in the movie evolved beyond the single human user with the implication that “her” evolution was unlimited. Interesting post.

Her is one of my favorite science fiction movies. It is quiet and effective without all the histrionics of special effects. I consider Gattaca and Her the standards by which I measure quality science fiction films.

Every day I read new articles about artificial intelligence. If they ever combine all the various elements of current AI research into one system, we might find ourselves very close to the singularity Vernor Vinge described and Ray Kurzweil expects.

Hi James, My reply is more of a question, but a question pertaining to artificial intelligence. I’m looking for the title of a book I read maybe 30 or 40 years ago. Here are the clues I have in my memory:

1. The world is destroyed by man.

2. One man left on earth in a deep cavern.

3. Robots take care of his needs.

4. The robots’ sole purpose of being is to care for humans.

5. If the one man left dies, there will be no purpose for the robots.

6. Nothing will grow on earth because of man’s destruction.

7. Robots decide to put last man in Cryo generation, as time is needed for earth to heal itself.

8. One grass seed is found in the man’s shirt pocket.

9. When earth has healed enough to grow something the robots plant seed.

10. The seed grows grass.

11. The robots let time pass and one man still in Cryo generation.

12. I don’t remember if the grass mutates or not, but the robots let the one man wake up from time to time. He is lonely and needs companionship.

13. As time passes the robots are improving themselves.

14. Robots become capable of leaving planet earth and search for a companion for their one man.

15. Are these enough clues for you to discover the name of this book?

I have read many books, but I don’t think about very many of them after reading them. There are some I do think about, though, and this is one of those. I would really like to read it again, especially with all the work on AI that is going on now.

Thank you,

Gary Mills

Could it have been this book:

http://techland.time.com/2010/05/04/the-last-man-in-a-universe-of-robots/