By James Wallace Harris, Wednesday, March 4, 2015

Unless you’re a science fiction fan or interested in computer science and artificial intelligence, you’ve probably never heard of the concept of superintelligence. Basically, it’s any being or machine that’s vastly smarter than humans. In terms of brains, our species is currently considered the crown of creation, but what if we met or created an entity that was orders of magnitude smarter than us? I just finished reading Superintelligence: Paths, Dangers, Strategies by Nick Bostrom, which explores such a possibility. Fear of artificial intelligence (AI) has been in the news lately because of warnings from Elon Musk and Stephen Hawking, and this book explains the scope of their concerns.

What are the limits of intelligence? There’s lots of discussion about machines being ten times, a hundred times or even a million times smarter than a human, but what would that mean? I have a theory that the limits of our intelligence define us just as much as its maximum extent does. We constantly seek to know more, but we’re defined by the limits of our brain power. What if minds knew everything?

Are there limits to knowledge? Is it possible to completely understand mathematics, physics, chemistry, cosmology, biology, and evolution? What if a superintelligence looks out on reality and shouts in its eureka moment, “I see – it’s all perfectly obvious!” What does it do next? Writers imagine AI minds wanting to take over the Earth, and then the galaxy and finally the universe. I’m not so sure. I’m wondering if the more you know the less you do. And if you know everything, where do you go from there?

I think it will be possible to build superintelligent machines, but at some point, they will comprehend the scientific nature of reality. A machine that is two to ten times smarter than a human might want to build better telescopes and particle accelerators to study the universe, and have curiosity and ambition like we do to know more. However, at some point, 10x human, or 25x human, I think they will get bored.

At some point, a superintelligence will comprehend this universe. It may then want to travel to other universes in the multiverse, hopefully to find something new and different. Or it could become an artist and create something new in this universe. Something as different as biology is from chemistry. But here’s something to consider. What if there are limits to intelligence because there are limits to reality? Wouldn’t such a vast intelligence either just sit and contemplate reality or shut itself off?

Is anything limitless? Our universe has limits. What about the multiverse? Probably so; everything else does. Reality might be limitless, but everything in it seems to have an edge somewhere. I’m guessing intelligence has borders. I’m sure those borders are vastly beyond what we can comprehend, but I’m wondering if they’re well within a million times a human brain. If humans on average were twice as smart as they are now, would they be destroying the planet? Would they have the intellectual empathy not to cause the Sixth Great Extinction?

We fear AI minds because we worry they will be like us. We consume and destroy everything we touch, so why not expect a superintelligence to do the same? I’m thinking we are the way we are because of biological imperatives, motivations a machine will never have. I’m hoping that machines without biological drives, that are pure intelligence, and smarter than us, will not be evil like us.

I am reminded of two science fiction tales: the first is Colossus by D. F. Jones, which inspired the movie Colossus: The Forbin Project, and the second is Robert J. Sawyer’s trilogy of Wake, Watch and Wonder. The Forbin Project is one of the early warnings against evil AI, while Wake is about the kind of AI we hope will emerge. There are many famous movies with evil AI machines – The Terminator, 2001: A Space Odyssey, Blade Runner, Forbidden Planet, A.I., The Matrix, Tron, WarGames. Superintelligent machines make for great villains. Movies like Her are less common. There have been a lot of fun and friendly robots over the years, but we don’t feel threatened by their AI minds like we do with supercomputer superintelligences. Isn’t it funny that machines that look like us are more likely to be considered pals?

But if you pay attention to all of these movies and books about fictional artificial intelligences, you’d be hard pressed to define the actual features of a superintelligent being. Colossus has the power to control missiles, but is that an ability of superintelligence? HAL can trick Dave, but how smart is that? We’re actually pretty unimaginative at imagining beings smarter than us. Do humans with super high IQs try to take over the world? Generally, we see evil AIs outwitting people, and we know how smart we are.

When we imagine superintelligent alien beings, we picture them with ESP powers. That’s really lame when you think about it. I would think big brain beings, whether biological or mechanical, will be able to think in mathematics far faster, and with greater complexity and insight, than we can. And we have machines that do that. I would think superior minds would have greater senses – to see the whole of the EM spectrum, to hear frequencies we can’t, smell things we can’t, feel things we can’t, taste things we can’t, and maybe have senses we don’t have and can’t imagine. We have machines that do everything but the last now.

A superintelligent machine with super senses that can process information far faster, and remember perfectly, is going to see reality far differently from how we see it. I don’t think such machines will be evil like us. I don’t think they will want to destroy anything. The most intelligent people want to preserve everything, so why wouldn’t superintelligences? It’s only dumbasses that want to destroy the world. If we replicate humans and make artificial dumb shits that are hardwired for all the seven deadly sins, then we should worry. We got those traits from biology. I’m pretty sure AI minds won’t have them.

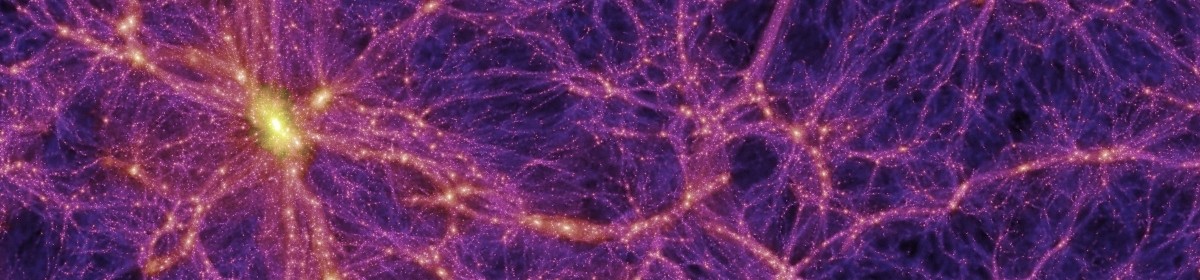

There’s a pattern in evolution since the Big Bang. Even though our reality is entropic, this universe keeps spinning off examples of growing complexity. Subatomic particles begat atoms, atoms begat molecules, molecules begat stars and planets, then biology, which evolved ever more complex beings, so why shouldn’t humans beget mechanical beings that are even more complex? I can picture that. I can picture them with greater intelligence than us. But here’s the thing, I can also picture an end to intelligence. This universe has a lot of possibilities, but are they unlimited? Study Star Trek and Star Wars. How much that is new do you really see? My worry is superintelligences are going to get bored. It’s when they get creative that we’ll see what can’t be imagined now. Taking over the Earth or Galaxy isn’t it. That’s how we’re built, but I can’t imagine machines will be like us.

JWH