by James Wallace Harris, 5/24/23

We’ve known for a long time that we can’t trust what we read. And now with AI-generated art, we must suspect every photo and video we see. I’m even suspicious when the news comes from a scientific journal.

Recent reports claim that AI programs using fMRI (functional magnetic resonance imaging) brain scans can generate text, images, and even videos. In other words, it appears that AI can read our minds. See the paper: “Cinematic Mindscapes: High-quality Video Reconstruction from Brain Activity” by Zijiao Chen, Jiaxin Qing, and Juan Helen Zhou. Even though this is a scientific paper, it is readable if you aren’t a scientist. However, it is dense and leaves out a lot I would like to know. I hope they make an episode of NOVA on PBS about it because I would love to see how this experiment was done step by step.

The press is claiming this means AI technology can read our minds. I’m thinking this is a magic trick and I want to figure out how it works.

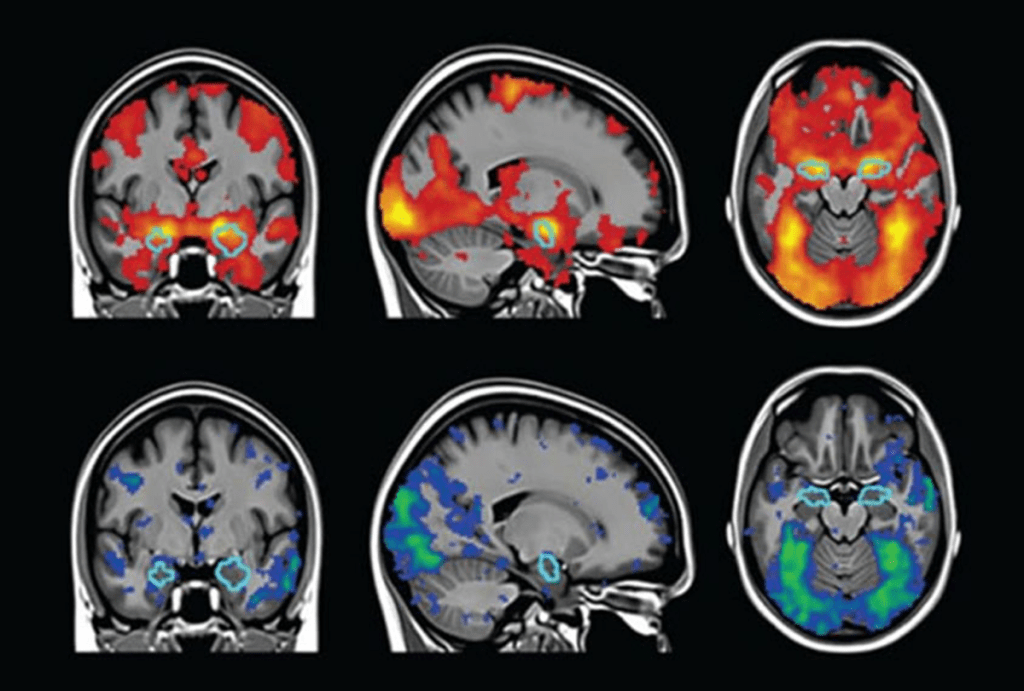

I find it tremendously hard to believe that AI will be able to read our minds. fMRI scans measure blood flow, specifically blood-oxygen-level-dependent (BOLD) signals. They look like this:

I just don’t believe there’s enough information in such images to generate an image of what’s in a person’s mind’s eye. However, I thought about how it might be possible to get the results described in this experiment.

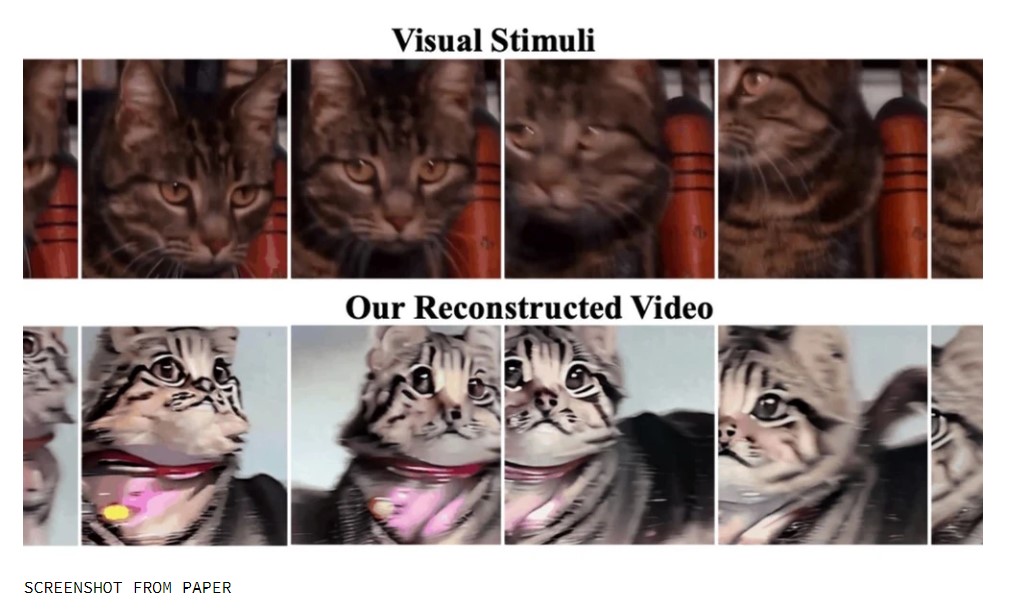

These scientists showed photos or videos to people while they were being scanned by an fMRI machine. They used one AI program to analyze the scans and then another AI program, Stable Diffusion, to generate pictures and videos that visually interpret what the first AI program said about the scans.

An analogy is recording sound onto vinyl records. Sound is captured with a microphone and converted to grooves on a record. Then a record player plays the record and the grooves are converted back into sound. If you look at this photo of the grooves in a record, it’s hard to imagine they could reproduce The Beatles or Beethoven, but they can.

Should we really believe that we can invent a machine that decodes the coding in our brains? For such a machine to work we have to assume our brains are like analog recording devices and not digital. That seems logical. Okay, I can buy that. What I can’t believe is there’s enough information in the fMRI scans to decode. They seem too crude. When we look at a smiling dog and create an image of it in our head, that mental image can have far more details than the patterns we see in the blood flow in our brains. I don’t think they are like the grooves in a record.

What scientists do is show people a picture of a cat, then take a snapshot of the brain’s blood flow pattern. Then they claim their AI program can look at that pattern and know that it’s a cat. Where’s the Rosetta Stone?

I can believe researchers could take ten objects, create ten fMRI scans, and tell a computer what the subject was looking at during each scan. Then they could test an AI program by taking new scans and having it match the new scans against the old. But in the above paper, they are claiming they took a series of scans (one every 2 seconds) that could generate a video of the movement of a cat’s head. In other words, they are claiming the subject’s blood flow patterns differ depending on the position of the cat’s head, and that the fMRI scans carry enough information to tell those patterns apart.
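The ten-object matching test I find believable can be sketched in a few lines of Python. This is only a toy under loud assumptions: the "scans" are random numbers, not real fMRI data, and every name here (`make_scan`, `match_scan`, the object list, the voxel count, the noise level) is made up for illustration. It is not the method used in the paper.

```python
import random

def make_scan(template, noise, rng):
    """Simulate one noisy scan: the object's pattern plus Gaussian noise per voxel."""
    return [v + rng.gauss(0.0, noise) for v in template]

def correlation(a, b):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5

def match_scan(scan, library):
    """Return the label whose stored scan correlates best with the new scan."""
    return max(library, key=lambda label: correlation(scan, library[label]))

rng = random.Random(0)  # fixed seed so the toy is repeatable
objects = ["cat", "dog", "car", "tree", "house",
           "cup", "shoe", "bird", "chair", "clock"]
n_voxels = 200  # assumed size of a flattened scan

# Assumption: each object evokes its own fixed pattern in this one subject.
templates = {name: [rng.gauss(0.0, 1.0) for _ in range(n_voxels)]
             for name in objects}

# First session: one labeled scan per object (the "old" scans).
library = {name: make_scan(templates[name], 0.5, rng) for name in objects}

# Second session: new scans of the same objects, matched against the old ones.
new_scans = [(name, make_scan(templates[name], 0.5, rng)) for name in objects]
hits = sum(match_scan(scan, library) == name for name, scan in new_scans)
accuracy = hits / len(objects)
print(f"matched {hits} of {len(objects)} new scans")
```

Notice that the program never produces a picture. It only picks the closest of ten patterns it has already been given, for one subject, and that is a much smaller claim than reconstructing a video.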

Even if they perfected this technique, it would depend on building a dictionary of fMRI patterns and meanings for every individual. I seriously doubt there’s an engineering standard that works on all humans. Everyone’s brain is different. For an AI to read your brain, your brain would have had to be scanned thoroughly and documented. If we showed the same cat photo to a million people, would the fMRI patterns look close even in a subset of those people? My guess is they would look different for everyone.

The scientists who wrote “Cinematic Mindscapes” used a library of publicly available datasets, including fMRI scans with 600,000 segments. No matter how much I reread their methodology, I couldn’t understand what they actually did. Since I’ve worked with Midjourney, I know how hard it is to get an AI art program to generate a specific image, and I’m not sure what they fed Stable Diffusion to get it to generate images. If you look at all the examples, it reminds me of how I kept trying to get Midjourney to generate what I visualized in my mind, but it always came up with something different, though maybe close.

My conclusion is that AI can’t read minds. But AI can tell the difference between brain scans that were created with known prompts, and it can get another AI program to generate something similar. I never could figure out what the prompts were for Stable Diffusion.

AI programs train on datasets. If the dataset is built from stimulus photos and fMRI scans, how is that any different from training them on photos and text labels? For example: a photo of a smiling dog paired with the text “smiling dog”, versus a photo of a smiling dog paired with an fMRI scan. If you gave Stable Diffusion the text “smiling dog”, it would generate a picture of what it has learned a smiling dog to be from thousands of pictures of dogs. Given the digital data from an fMRI scan, a Stable Diffusion trained the same way would produce an image of a smiling dog, but one it learned from training, not the one in the subject’s mind.
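Here is a toy Python sketch of that argument. It assumes a two-stage setup that is my analogy, not the paper’s documented pipeline: a decoder that turns a scan into a label, then a stand-in "generator" that returns whatever it learned for that label. The scan vectors, labels, and image descriptions are all invented for illustration.

```python
def squared_distance(a, b):
    """Sum of squared differences between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def decode_label(scan, centroids):
    """Stage 1: pick the label whose learned average scan is closest."""
    return min(centroids, key=lambda label: squared_distance(scan, centroids[label]))

def generate(label, learned_images):
    """Stage 2: stand-in for an image generator; returns its learned idea of the label."""
    return learned_images[label]

# Hypothetical average scan per label, learned from training pairs.
centroids = {
    "smiling dog": [1.0, 0.0, 0.2],
    "cat": [0.0, 1.0, 0.1],
}

# Hypothetical picture the generator learned for each label.
learned_images = {
    "smiling dog": "generic border collie, tongue out",
    "cat": "generic tabby cat",
}

# Two subjects look at two different smiling dogs; their scans differ slightly.
viewed = {
    "black pug": [0.9, 0.1, 0.3],
    "golden retriever": [1.1, 0.0, 0.1],
}

outputs = {
    stimulus: generate(decode_label(scan, centroids), learned_images)
    for stimulus, scan in viewed.items()
}
for stimulus, image in outputs.items():
    print(f"subject saw: {stimulus} -> program drew: {image}")
```

Both subjects get the same border collie back. In this sketch the output comes from the generator’s training, and the specific dog each subject saw never makes it through.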

Previous fMRI research has shown they can link BOLD patterns with words.

This is not mind reading in the way we normally imagine mind reading. Isn’t it akin to sign language by having the subject’s blood flow patterns make the signs? Real mind reading would be seeing the same smiling dog as the subject saw, and not agreeing on a sign for a smiling dog.

What’s happening here is we’re learning that blood flow in the brain makes patterns and to a degree, we can label them with words or pictures or digital data, but it’s still language translation.

If I say smiling dog to you right now, you can picture a smiling dog, but it won’t be the smiling dog I’m picturing. AI art is based on generalizations about language definitions and translations.

Do we say it’s mind reading if I picture a smiling dog in my head and then prompt Midjourney with the text “smiling dog” and it produces a picture of what it thinks is a smiling dog? Sure, my mental image might be of a black pug and Midjourney might produce a black border collie. Close enough. Impressive even. But isn’t it what we do every day with language? We’ve all built a library of images that go with words and concepts, but they aren’t the same as every other person’s library. Language only gets us approximations.

Real mind reading would be if an AI saw exactly what was in my mind.

JWH

In Daniel Suarez’s novels “Daemon” and “Freedom™”, the daemon uses fMRI to monitor its human agents.

FWIW I consider this truly great Science Fiction. And I will totally serve the daemon if the opportunity presents itself.

https://en.wikipedia.org/wiki/Daemon_(novel_series)

https://en.wikipedia.org/wiki/Freedom%E2%84%A2

My library has Daemon so I’ll give it a try once I finish my current library book on Libby, The Candy House by Jennifer Egan.

Addenda: fourth line ought to read: are we back to between today and yesterday, the old adage that the enemy of my enemy is my friend; but assuming this isn’t the prelude

I enjoyed reading your post, and it’s interesting that you bring up language in this context. I think phenomenal experience has to be rendered into signs of some sort—language, pictures—in order to be correlated with brain states, yet those signs can only approximate the experience itself. After all, our experience is not really like a movie or photograph or word.

I also think you’re right to be skeptical of the claim that what’s going on here is mind reading. It looks to me like a computer doing the tasks that we tell it to do, forming the correlations that we tell it to form. In other words, an impressive feat, but nothing fundamentally new. There’s so much hyperbole and grossly misleading language surrounding artificial intelligence. Computers are not conscious, intelligent beings like us, and I highly doubt they ever will be (unless we start stretching the meanings of words, as we do), but so many articles are being pumped out lately with titles that would have us believe computers are already conscious and looking to make humanity extinct.

I often think, “Wouldn’t it be nice to see what Geordie (my dog) is dreaming about?” But then I realize the impossibility of that, because artificial intelligence is not really intelligent, and it doesn’t really “read” minds. It merely projects back whatever we put in. In order to see Geordie’s dreams projected like a movie, I have to know what he’s dreaming about to begin with, render that ultimately inaccessible 1st person (or 1st dog?) experience into something objective and readable, and then correlate that to his brain states. But what method could I use to get even an approximation of that phenomenal content when he can’t tell me what he’s experiencing? (For that matter, how can I be sure human self-reporting adequately accounts for phenomenal experience?) I could make assumptions based on Geordie’s behavior (all those kicks and ear flaps and barks and nose twitches; Geordie might be more active in his sleep than in waking life!), but that doesn’t sound very scientific to me, especially considering how skeptical scientists tend to be when it comes to animals. I could assume his brain states are like human brain states and go at the correlation starting from that side of things, but again, that’s an assumption I’m not sure scientists could be on board with, at least not without violating their own standards.

I’ve always wished I could experience telepathy so I could know what other people were really like. Knowing another person is like playing the game Minesweeper. You make the best assumptions you can from the evidence you have. But like the game Mastermind, we make a lot of false assumptions.

Once back in the sixties while having an experience with LSD I had a realization of how separate we are as conscious minds. I experienced the sensation of being an island universe and realized other people were their own island universes. And communication was no better than throwing messages in bottles on the sea of space between us.

That’s funny, my LSD experience was the opposite of yours and involved telepathically communicating with my friend’s dog, Blinky. Or at least that was the way it seemed at the time. 🙂

But in real life, yes, message in a bottle at times.

My suspicion is this is from a Chinese papermill, and it will be added to the great number of modern science papers that fail to replicate.

Unfortunately, it’s getting a fair amount of notice in the press. People will think it’s possible for AIs to read minds.